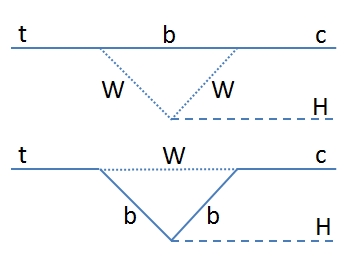

The question is a legitimate one, since the top quark has a mass about 40% larger than that of the Higgs boson, so in principle the decay could be allowed. For instance, one could imagine that the top "fluctuates" into a bottom quark - W boson combination, that the W boson then emits a Higgs particle, and that finally the bottom quark and W boson fuse into a charm quark. Or, once the top fluctuates into a Wb pair, it is the bottom quark that emits the Higgs boson before rejoining the W to create a charm quark. The diagrams are shown below.

Such processes must have a very small probability of actually taking place: in the standard model the predicted branching fraction is in the 10^-15 range - the so-called "don't even bother" zone. This is because the contributions of the different quarks in the virtual diagrams, which must be considered together, naturally cancel one another.

However, one might imagine exotic ways to greatly enhance the rate of these processes: exotic particles hiding in the quantum loop might be extremely massive and yet still significantly influence the probability of the decay, as they would be free from the constraints of the Glashow-Iliopoulos-Maiani mechanism.

So it is a good idea to look for the rare decay t->cH in events featuring the production of a top-antitop quark pair, which are very frequent at the LHC (in 2012, we produced them at a rate of one every few seconds). And it is actually fun, too: one may study the decay of the Higgs to two photons and get a very clean sample of data, in which one looks for multiple mass peaks - a top-like mass peak in the invariant mass distribution of photon pairs plus a jet, a top mass peak produced by the three other objects created when the other top quark decays into Wb->jjj or Wb->lνb (respectively, three jets, or a lepton, a neutrino, and a b-jet), and of course a Higgs mass peak from the two photons. If you are a particle physicist, that's about as much fun as you can expect to get from particle searches!
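For readers who want to see what "looking for a mass peak" means in practice, here is a minimal sketch of how an invariant mass is computed from reconstructed objects. It is not the ATLAS analysis code: the function names `four_vector` and `invariant_mass` and the toy kinematics are my own assumptions, and photons and jets are treated as massless for simplicity.

```python
import math


def four_vector(pt, eta, phi, mass=0.0):
    """Build (E, px, py, pz) in GeV from collider coordinates.

    Hypothetical helper for illustration; objects are massless by default.
    """
    px = pt * math.cos(phi)
    py = pt * math.sin(phi)
    pz = pt * math.sinh(eta)
    energy = math.sqrt(px * px + py * py + pz * pz + mass * mass)
    return (energy, px, py, pz)


def invariant_mass(*vectors):
    """Invariant mass of the sum of any number of four-vectors."""
    energy = sum(v[0] for v in vectors)
    px = sum(v[1] for v in vectors)
    py = sum(v[2] for v in vectors)
    pz = sum(v[3] for v in vectors)
    m2 = energy * energy - px * px - py * py - pz * pz
    # Rounding can push m2 slightly below zero for light-like sums.
    return math.sqrt(max(m2, 0.0))


# Toy event (made-up numbers): two back-to-back photons plus one jet.
photon_1 = four_vector(pt=62.5, eta=0.0, phi=0.0)
photon_2 = four_vector(pt=62.5, eta=0.0, phi=math.pi)
jet = four_vector(pt=40.0, eta=0.0, phi=math.pi / 2)

m_gg = invariant_mass(photon_1, photon_2)        # Higgs candidate
m_ggj = invariant_mass(photon_1, photon_2, jet)  # top candidate (t->cH)
print(f"m(gamma gamma) = {m_gg:.1f} GeV, m(gamma gamma jet) = {m_ggj:.1f} GeV")
```

In a real search one would fill histograms of these quantities over many events and look for the diphoton mass to cluster near the Higgs mass while the diphoton-plus-jet mass clusters near the top mass.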

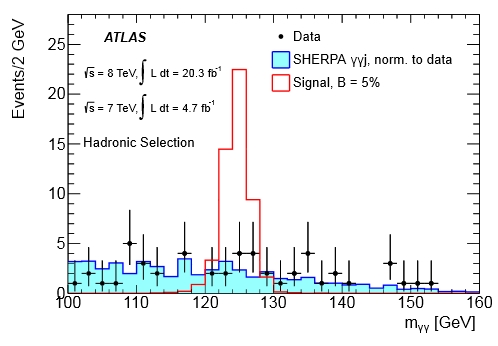

ATLAS did precisely that, using data from the 2011 and 2012 runs of the Large Hadron Collider. As a sampler, have a look at the mass distribution of event candidates in the "all-hadronic" final state - the one where, besides the two photons, the event features four jets, so that one combines one of the jets with the photons to get a top candidate, and the other three to get the other top.

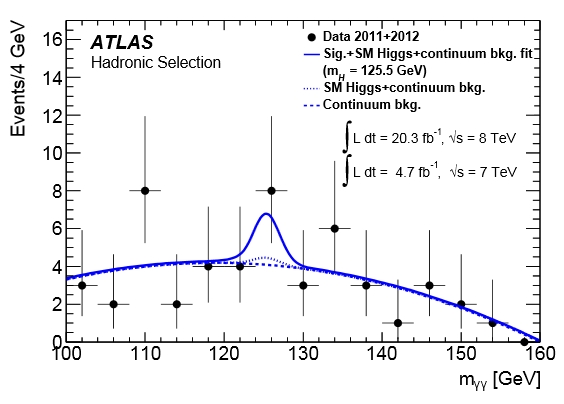

As you can see, the H->γγ decay signal (here in red, shown hypothesizing a branching fraction of top to charm-Higgs of 5%) would stand out clearly over regular standard model processes (cyan histogram). In fact, one can use a data-driven background shape to fit for a signal contribution, obtaining a small, insignificant signal, as shown below. Once this is combined with the search in the other decay mode of the top quark not going into a Higgs (single-lepton), the result becomes an upper limit of 0.79% on the t->qH branching fraction. A remarkably tight bound!

Note that the graph shows two background shapes. The finer dashing shows the result of including the estimate for regular standard model Higgs production, which should contaminate the data sample through ttH, tH, WH, and other Higgs-related processes. Their combined effect is small, but it has been taken into account in the fit to the exotic signal contribution.

Also note a disturbing feature in the ATLAS way of plotting experimental data in the above graph: all data points have an error bar except the rightmost one at 155 GeV. This seems to imply that their estimate of the interval covering the possible true values of the average event count in each bin collapses to a zero-length interval when they observe zero events. That is of course mistaken - the data point at zero should have an upper error bar extending all the way to 1.84 events.
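Where does 1.84 come from? A minimal sketch follows, assuming the convention meant here is the central 68.27% interval for a Poisson mean (the choice that reproduces the 1.84 figure). The helper names are mine, and the bisection is just a dependency-free way of inverting the Poisson tail probabilities.

```python
import math

# "One sigma" coverage of a Gaussian, about 0.6827.
CL = math.erf(1.0 / math.sqrt(2.0))
TAIL = (1.0 - CL) / 2.0  # probability left in each tail, about 0.1587


def poisson_cdf(n, mu):
    """P(N <= n) for a Poisson variable with mean mu."""
    if n < 0:
        return 0.0
    term = math.exp(-mu)
    total = term
    for k in range(1, n + 1):
        term *= mu / k
        total += term
    return total


def bisect(f, lo, hi, iters=200):
    """Root of f in [lo, hi], assuming f(lo) and f(hi) differ in sign."""
    f_lo = f(lo)
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        f_mid = f(mid)
        if (f_mid > 0) == (f_lo > 0):
            lo, f_lo = mid, f_mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def poisson_interval(n):
    """Central interval (lower, upper) on the mean, given n observed events."""
    hi = n + 10.0 * math.sqrt(n + 1.0) + 10.0  # generous search bound
    upper = bisect(lambda mu: poisson_cdf(n, mu) - TAIL, 0.0, hi)
    if n == 0:
        return 0.0, upper
    lower = bisect(lambda mu: 1.0 - poisson_cdf(n - 1, mu) - TAIL, 0.0, hi)
    return lower, upper


low, up = poisson_interval(0)
print(f"n = 0: [{low:.2f}, {up:.2f}]")  # upper edge is -ln(TAIL), about 1.84
```

For zero observed events the upper edge has a closed form: it is the mean for which the probability of seeing nothing, exp(-mu), equals the tail probability, so mu = -ln(0.1587), which is about 1.84.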

Despite that minor blemish, this is a great new result from ATLAS. Recently I have seen the two experiments across the LHC ring challenging one another on ways to squeeze as much information as possible from their datasets as far as Higgs boson phenomenology is concerned; of course, the recent CMS result on the Higgs boson width comes to mind in this respect. So, kudos to ATLAS for this nice new result, waiting for the next shot by CMS!
