Plagiarism, using others' work but putting it forth as your own, has been a problem since folks started putting pen to paper (or chisel to stone). Even stalwart heroes like Helen Keller and Martin Luther King, Jr. have been accused of the big P. But pretend you're an editor at a scientific journal - how are you supposed to know, without spending a lot of time, if the submitted article you're reviewing has taken chunks from an article published in a competing journal? Enter CrossRef's CrossCheck, a service that uses iParadigms' iThenticate plagiarism software.

CrossCheck is a growing database - 83 publishers have submitted 25.5 million articles from 48,517 journals and books, according to Nature News. Members can use iThenticate to compare submitted manuscripts against the articles in the database for similar content. My old employer, Elsevier, is one of the participants in the database.

Nature News says that the software is catching a lot of plagiarists in the act:

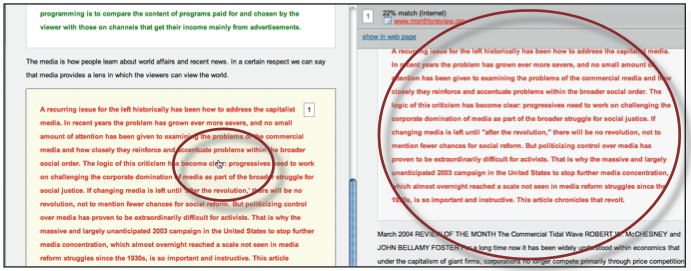

> As publishers have expanded their testing of CrossCheck in the past few months, some have discovered staggering levels of plagiarism, from self-plagiarism, to copying of a few paragraphs or the wholesale copying of other articles. Taylor and Francis has been testing CrossCheck for 6 months on submissions to three of its science journals. In one, 21 of 216 submissions, or almost 10 percent, had to be rejected because they contained plagiarism; in the second journal, that rate was 6 percent; and in the third, 13 of 56 articles (23 percent) were rejected after testing, according to Rachael Lammey, a publishing manager at Taylor and Francis's offices in Abingdon, UK.

The service isn't free, obviously, but compared with the time an editor would spend searching every article known to mankind (or skipping the search and hoping no retraction is looming), having software do it for you is money well spent, I'd say. There are other ways to search: a group at UT Southwestern used the online Déjà vu database. Here is an example of one paper that appears to be almost entirely plagiarized from work by a different author:

Two original paragraphs and two tables? That's all you have for original work? Ouch. The problem with the Déjà vu experiment was that the suspect papers were publicly posted. That raises a number of red flags, especially if the so-called plagiarism turns out to be a false positive. The iThenticate User Manual makes the excellent point that just because a similarity is detected doesn't mean the text is plagiarized, only that the content is similar:

> The similarity indices do not reflect iThenticate’s assessment of whether a paper has or has not been plagiarized. Similarity Reports are simply a tool to help our clients find sources that contain text similar to the submitted documents. The decision to deem any work plagiarized must be made carefully, and only after an in-depth examination of both the submitted paper and suspect sources.

This is important: maybe you're quoting a source, in which case of course you're going to have the same text. And reuse of text from some portions of a scientific paper is less concerning than from others, Elsevier's director of journal services, Catriona Fennell, says in the Nature News article: "Self-plagiarism of materials and methods can sometimes be valid, for example, says Fennell. 'There are only so many different ways you can describe how to run a gel,' she says. 'Plagiarism of results or the discussion is a greater concern.'"
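To make the "similarity, not plagiarism" point concrete, here is a minimal Python sketch of one simple way a similarity score can be computed. It is not iThenticate's method (that is proprietary and not described in the manual excerpt above); it assumes a common textbook technique, breaking each text into overlapping three-word sequences ("shingles") and measuring their Jaccard overlap. The function names, the choice of three words, and the two sample methods sentences are all hypothetical, made up for illustration.

```python
import re


def shingles(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping n-word sequences in text.

    Text is lowercased and split on anything that isn't a letter or digit,
    so punctuation and hyphens are ignored.
    """
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def similarity(a: str, b: str, n: int = 3) -> float:
    """Jaccard similarity of the two texts' shingle sets.

    0.0 means no n-word sequence is shared; 1.0 means the sets are identical.
    A high score only says the wording overlaps, not that anyone copied.
    """
    sa, sb = shingles(a, n), shingles(b, n)
    if not sa and not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


# Two hypothetical methods sentences describing the same routine gel step.
methods_a = ("Proteins were separated on a 12% SDS-PAGE gel and "
             "transferred to a nitrocellulose membrane.")
methods_b = ("Proteins were separated on a 12% SDS-PAGE gel and "
             "transferred to a PVDF membrane.")

score = similarity(methods_a, methods_b)
print(f"{score:.2f}")  # 11 of 15 distinct shingles shared -> prints 0.73
```

A score like this would flag the two sentences for a human to look at, but as Fennell points out, there are only so many ways to describe running a gel, so overlap in a methods section is often benign. Deciding whether it is plagiarism still takes the careful examination the manual calls for.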

An example of the output from the user manual shows how text is color-coded, making it easy to compare your text with that of another article:

I think this could potentially be a great tool for editors, not just of scientific journals but of all publications - used carefully and judiciously, of course. A nice feature of the Internet is that bloggers can link back to articles and sources, and if iThenticate can search all blogs and news sites, perhaps Jayson Blair's editor could have caught the plagiarism before it blew up in the NY Times' face.

----------------------------------------------------------------

Source: "Journals step up plagiarism policing." Declan Butler, Nature News, July 5 2010.
