Funding for institutions of higher education in Norway is partially determined by how many publications their employees produce. While there are already six troubling problems with this system, one of them is about to get much, much worse.

A group of experts was commissioned by the Ministry of Education and Research to suggest improvements in the financing system. Their report includes one proposal that is not only mathematically garbled; it is also an incentive to corruption.

Here’s how it works: In our current system, an individual publication earns a certain number of points. For example, an article in a peer-reviewed journal earns one point, while an article in a really good peer-reviewed journal earns three. These two options are dubbed “Level 1” and “Level 2.”

Rewarding co-authorship

The new proposal affects the division of points among multiple authors. Currently, the points earned by an article are divided equally among its authors. Four co-authors of an article appearing in a Level 1 journal see the one earned point divided equally among them, such that each earns 1/4 of a point for the article.

The experts propose a different strategy for rewarding co-authorship. Instead of dividing the point(s) earned by the number of authors, they propose dividing it by the square root of the number of authors.

In the new system, those same four researchers who co-author a Level 1 article will each be awarded not 1/4 of a point, but rather 1/√4 of a point, i.e. 1/2 of a point. The article thereby generates a total of two points, since each of the four authors gets credited with 0.5 points.
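The arithmetic of the two rules can be checked with a short Python sketch. The function names and the `level_points` parameter are illustrative and do not come from the report. The sketch assumes the proposal means exactly "divide by the square root of the number of authors," before any grouping into categories.

```python
import math


def share_current(level_points: float, n_authors: int) -> float:
    """Per-author share under the current rule: points / number of authors."""
    return level_points / n_authors


def share_proposed(level_points: float, n_authors: int) -> float:
    """Per-author share under the proposed rule: points / sqrt(number of authors)."""
    return level_points / math.sqrt(n_authors)


def article_total(share: float, n_authors: int) -> float:
    """Total credited to one article when every author's share is counted."""
    return share * n_authors


# Four co-authors of a Level 1 article (1 point).
current = share_current(1, 4)    # 0.25 per author
proposed = share_proposed(1, 4)  # 0.5 per author

print(article_total(current, 4))   # 1.0
print(article_total(proposed, 4))  # 2.0
```

Under the current rule the shares always sum back to the article's own points. Under the square-root rule, as sketched here, they sum to more than that whenever there is more than one author.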

But a Level 1 article is supposed to earn just one point, so it’s hard to reconcile this proposal with a formula that in this case gives the article two. The experts simply leave us hanging here; it’s impossible to know what they mean. Maybe they mean that Level 1 articles earn at least 1 point and Level 2 articles earn at least 4 points (they want to raise it from 3). Who knows?

When publishing pays — and pays well!

Of course, it’s not just the confusion about whether an article gets one point or two that is the problem. The total number of points associated with a particular article is proposed to become a function of the number of authors. If my research lab gets money from the university for the points our publishing generates, what’s the smart strategy? I put the name of everyone at the lab on every article!

If I write a Level 1 article myself, the team gets one point. But if I add 8 of my colleagues to the list of authors (and, hey, we’re all working together here), then each of us gets 1/√9 points, i.e. 1/3 of a point, which means that in total we get credited with 9 × 1/3 points, i.e. 3 points. In recent years, points have been worth about $3,000, so co-authorship in this way adds a few grand to our group’s budget.
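A rough sketch of the lab example, computed without rounding the individual shares. It assumes all nine authors belong to the same institution and uses the approximate $3,000 per point quoted above. `total_points` and `POINT_VALUE_USD` are hypothetical names chosen for illustration.

```python
import math

POINT_VALUE_USD = 3_000  # approximate value of one point, as quoted in the text


def total_points(level_points: float, n_authors: int) -> float:
    """Total points under the square-root rule, with all authors counted."""
    return n_authors * level_points / math.sqrt(n_authors)


solo = total_points(1, 1)  # one author, Level 1
team = total_points(1, 9)  # nine authors, Level 1
extra_usd = (team - solo) * POINT_VALUE_USD

print(solo, team, extra_usd)  # 1.0 3.0 6000.0
```

Computed exactly, nine authors at 1/3 of a point each come to 3 points; rounding each share down to 0.3 first gives 2.7. Either way, the total grows with the square root of the author count, which is the incentive at issue.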

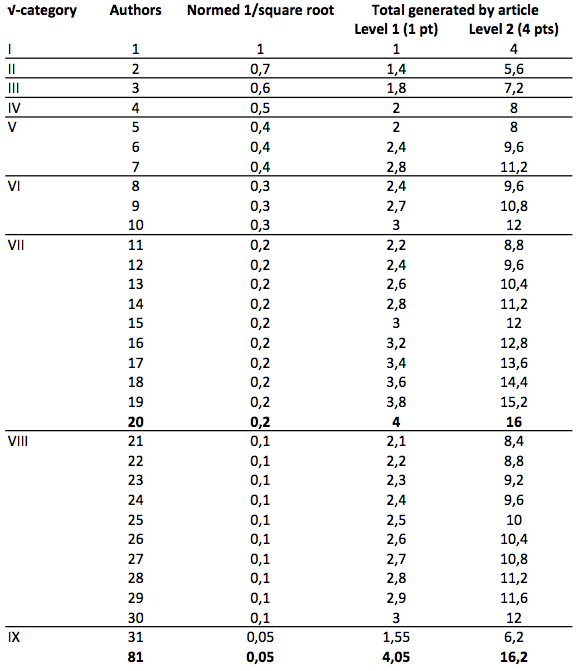

In their wisdom, the experts concede that this is complex and they therefore propose using only nine different multipliers instead of an infinite number — a move they describe as a “simplification!” The chart here shows the total points an article generates, as a function of which of the nine √-multiplier categories applies, the number of authors, and whether it is Level 1 or Level 2.

The stated intention is to strengthen fields that have a tradition for co-authorship, namely the natural sciences and medicine. Since the total amount of money generated by these points in the national system is fixed, this also entails a weakening of the subjects without a tradition for co-authorship, namely the humanities and social sciences.

The proposal also weakens Norway’s university colleges — already engaged in a yeoman’s effort to improve their research profiles — since they also tend to focus on fields with weaker traditions for co-authorship.

As you can see in the chart above, 20 is the optimal number of authors, generating a total of 4 points for a Level 1 article and 16 for Level 2. To earn more, you have to list 81 authors, which is uncommon everywhere but in physics. Indeed, in my field (generative phonology), there may not be 81 of us at all.

Corruption and leadership

The experts claim their modifications should lead to enhancements in quality. Maybe that’s buried in here somewhere, but what is perfectly clear is that they are rewarding quantity — specifically, quantity of authors. The more authors on an article, the more points (=money) it generates.

Where there’s money, there’s the potential for corruption. The square root system will lead to an explosion in the number of points generated nationally and to the appearance of more guest authors.

Policy should be evidence-based, all the more so for research institutions. There’s plenty of research on the effects of bibliometrics, but none of it appears in this report. Systems of accountability that infer the quality of individual articles as a function of some fact about the journal have been repeatedly demonstrated to be unreliable.

The square root approach — even in its “simplified” version — is far too complex for people to remember and will therefore lead to little more than increased opacity and frustration. Good leadership requires transparency and this proposal should therefore be immediately abandoned.

If our Minister wants to reward international collaboration, there’s an easy and coherent way to do it: divide the point(s) by the number of authors in the Norwegian system.
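One possible reading of that alternative, sketched in Python: only authors affiliated with Norwegian institutions count in the denominator, so international co-authors do not dilute the share. This interpretation, the function name, and its parameters are assumptions made for illustration, not details from the report.

```python
def share_norwegian_only(level_points: float, n_norwegian_authors: int) -> float:
    """Per-author share when only authors in the Norwegian system are counted."""
    if n_norwegian_authors < 1:
        raise ValueError("need at least one author in the Norwegian system")
    return level_points / n_norwegian_authors


# Level 1 article with 2 Norwegian authors and any number of international co-authors.
share = share_norwegian_only(1, 2)
print(share, share * 2)  # 0.5 1.0
```

On this reading the article still generates exactly its Level 1 or Level 2 points within Norway, however many outside collaborators are listed.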

Financing systems are legitimate tools for Ministers as they try to motivate behaviors leading towards the government’s political goals. But they must be coherent, simple, transparent and fair. The square root proposal is none of these.

This article was originally published on Curt Rice - Science in Balance.
