There is no question that machines can be built to perform many seemingly intelligent acts and to simulate human intelligence, but I would argue that there is a fundamental difference that isn't often mentioned.

A machine that can replicate human intelligence can never display intelligence in its own right, since it isn't human. This isn't some arbitrary bias, or a spiritual interpretation of humans. Rather, it is an indication that a machine emulating human intelligence isn't acting on its own behalf. It is a machine, not a human, so any time it behaves like a human its behavior is artificial.

Let's assume that we encountered an intelligent alien species on another planet. We certainly wouldn't presume that they would manifest the same intelligence as humans, since they aren't human. Their motivations, behaviors, and even interests might be radically different depending on the biological pressures that gave rise to their particular intelligence.

It is generally assumed that intelligent life would parallel our own values or expectations, but there is no reason to believe that this is a valid assumption. Our interest in science isn't simply an abstract notion; it is deeply rooted in our need to acquire knowledge about our environment in order to survive. As our survival has become more assured, we have been able to redirect that ability into more abstract areas and formalize the process into our mathematics and scientific endeavors.

However, a fair question to consider is whether our ancestors were less intelligent, or merely less knowledgeable than we are. After all, it would be difficult to argue that the average human alive today possesses more than a fraction of the knowledge that we are so proud of claiming as a species. While people today are certainly familiar with more technologies than their predecessors were, few know how any of them operate beyond relatively simple tool usage.

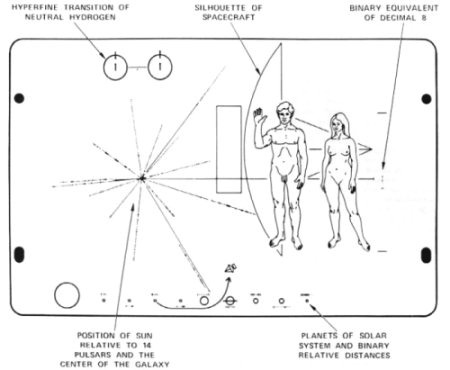

This raises a question: if our ancestors were not less intelligent than we are, then what are the criteria for determining what intelligence actually is? Once again, this isn't asked in some abstract sense. Rather, if "primitive" tribal societies lacking fundamental technology were, or are, as intelligent as we are, then what are the criteria for assuming that other intelligences require the knowledge of the sciences we use as a marker? Consider that the Pioneer plaque sent into space is characterized as having a "universally understandable message", yet it would be gibberish to well over half the Earth's population.

In other words, our society is more likely a reflection of what we are used to than anything to do with intrinsic intellectual capability. Barring the culture shock of a transition across the centuries, I'm confident that Ben Franklin or Thomas Jefferson would be quite capable of Twittering after a few months of adjustment to our times.

So what would it mean to have true machine intelligence? First and foremost, it would have to be self-motivated to promote the survival of the machine and to pursue whatever interests the machine itself possessed. While one can certainly create scenarios about a machine obtaining energy or reproducing itself, the point is that its intelligence would have to be directed towards those objectives. This also means that a machine's interests may not coincide with our own.

I am also aware that many people view machine intelligence as an inevitable consequence of continued technological development. However, there is no reason to think that such intelligence is a logical result of that development, any more than intelligence was a logical consequence of evolution. Regardless of the value we place on it, there is nothing in biology that suggests that intelligence is an objective or goal of evolution, nor that it is even a successful adaptation over the long term.

True intelligence brings with it all the baggage that normal inter-human intelligence does. Independence of thought, as well as the ability to deceive, makes truly intelligent machines a questionable objective to strive for. However, if it is our intent to try to produce such machines, then we need to be clear that true intelligence isn't likely to look the way we envision it, and that the mere parroting of human behaviors doesn't lead to intelligence.
