Experiments of this kind outlive the demise of their hardware, since extracting precise physics measurements from the large accumulated datasets may take several years. So it is no surprise to see two new preprints on the arXiv (here and here) which describe in detail the experimental techniques that the collaboration uses to extract jet physics results from the data.

The two thick papers describe, respectively, the fine-tuning of the jet energy calibration and of the b-tagging algorithms. The information they contain supports many already published articles which report physics measurements employing those techniques; but the fact that some of the procedures described are new (such as the "system 8" b-tagging method) implies that the experiment might still be able to improve on some of its earlier results. In other words, rather than a swan song, these "technical" publications indicate that more precise results can still be obtained from Run II data, if enough manpower remains available to perform the analyses.

Of course, most of the physicists who still sign the DZERO papers (about 400) have shifted their attention to other experiments, in particular ATLAS and CMS at the CERN Large Hadron Collider. And the interest of the data coming from the LHC is definitely higher, which makes it a challenge to produce final results from DZERO and CDF.

It is a pity because, although to first order it does not matter much what specifically the projectiles are when you smash one hadron against another, there are some subtleties that make the physics of 2-TeV proton-antiproton collisions different from that of 8-TeV or 13-TeV proton-proton collisions. Hence the bounty of data acquired at Fermilab is not becoming entirely obsolete now that larger datasets of higher-energy collisions are available at CERN.

As an example of a physics-related "specificity" of the lower-energy Tevatron collisions one may take the top quark pair production asymmetry, a subtle difference between the number of top and anti-top quarks emitted in the direction of the proton beam. In principle, the study of that effect could reveal non-standard production mechanisms of top pairs, such as the decay of a heavy state X. Such a measurement is not as effective at the LHC because most top quark pairs there are produced by gluon-gluon fusion processes, and the initial state is charge symmetric (a proton from this side, a proton from the other side).
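To make the idea of a production asymmetry concrete, here is a minimal sketch of a counting asymmetry and its statistical uncertainty. The function name and the event counts are hypothetical, and the simple "forward minus backward over total" form with a binomial error is an assumption for illustration; the actual analyses use more refined definitions and corrections.

```python
import math


def forward_backward_asymmetry(n_forward, n_backward):
    """Return (asymmetry, statistical uncertainty) for two event counts.

    Assumes a simple counting definition, A = (F - B) / (F + B), with a
    binomial uncertainty sqrt((1 - A^2) / N). Illustrative only.
    """
    total = n_forward + n_backward
    if total <= 0:
        raise ValueError("need at least one event")
    asymmetry = (n_forward - n_backward) / total
    sigma = math.sqrt((1.0 - asymmetry ** 2) / total)
    return asymmetry, sigma


# Hypothetical counts, not taken from any measurement.
a_fb, err = forward_backward_asymmetry(550, 450)
print(f"A = {a_fb:.3f} +/- {err:.3f}")
```

With a charge-symmetric initial state, as in proton-proton collisions, "forward" has no preferred direction, which is one way to see why this simple counting works less well at the LHC.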

Another interesting result that has not been produced yet is a precise measurement of the W boson mass by DZERO using the full Run II statistics (I do not know whether such a measurement is being worked on or not). The W mass is of course one of the most important basic parameters of the standard model, and although we know it to 0.02% precision thanks primarily to the CDF measurement, a further improvement in the precision would significantly improve the global picture. To see this, just look at the graph below, which shows the result of a global fit by the gFitter group.

This is a busy graph, so let me explain it in some detail. On the horizontal axis is the top quark mass, and on the vertical axis is the W boson mass. The two quantities are connected in the standard model to the Higgs boson mass (and to other parameters which are not shown here). The connection forces "possible points" of the theory to lie within a diagonal band, which is the one labeled "125.7 GeV" to indicate that the location of the band does depend on the precise value of the Higgs mass. As you can see, the result of a global fit to all standard model observables except the W and top masses, in blue, is in great agreement with the direct determination of the top and W masses (black point with error bars). You can also see that the parameter which is most sensitive here is the W mass: by moving it by 0.01% (8 MeV) you can really modify the quality of the agreement. So a better precision of the W mass measurement is still a highly wanted deliverable to this day.
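The percentages quoted for the W mass are easy to convert into MeV. The snippet below is just that arithmetic, assuming a rounded W mass of 80.4 GeV; the constant and the function name are mine, chosen for illustration.

```python
# Rounded W boson mass in GeV (an approximation used only for this arithmetic).
M_W_GEV = 80.4


def relative_to_mev(mass_gev, relative_precision):
    """Convert a relative precision (e.g. 0.0001 for 0.01%) into MeV."""
    return mass_gev * 1000.0 * relative_precision


shift_mev = relative_to_mev(M_W_GEV, 0.0001)      # the 0.01% shift
precision_mev = relative_to_mev(M_W_GEV, 0.0002)  # the 0.02% precision
print(f"0.01% of the W mass is about {shift_mev:.0f} MeV")
print(f"0.02% of the W mass is about {precision_mev:.0f} MeV")
```

So the 0.01% shift corresponds to roughly 8 MeV, and the 0.02% precision to roughly 16 MeV.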

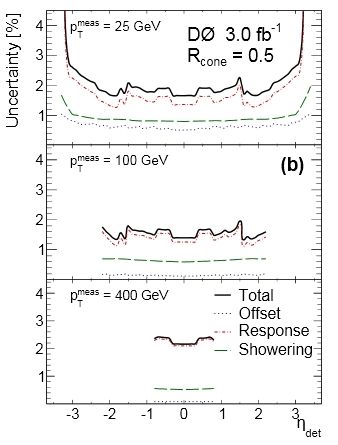

Returning to the two new DZERO papers, let me show here one representative graph from each. In the figure on the right you can see the total uncertainty in the jet energy scale for jets in three energy ranges (low 25 GeV, medium 100 GeV, and high 400 GeV transverse energy) as a function of the jet pseudorapidity (zero means jets orthogonal to the beam direction, and large absolute values of pseudorapidity mean a small angle with respect to the beams in either direction). As you can see, the result of careful calibration studies is an error of less than 2% on the energy of jets in a wide energy and angle range.
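For readers less familiar with pseudorapidity, the standard definition in terms of the polar angle theta measured from the beam axis is eta = -ln(tan(theta/2)). Here is a small sketch of the conversion in both directions; the function names are mine.

```python
import math


def pseudorapidity(theta_rad):
    """Pseudorapidity for a polar angle theta (radians) from the beam axis."""
    if not 0.0 < theta_rad < math.pi:
        raise ValueError("theta must lie strictly between 0 and pi")
    return -math.log(math.tan(theta_rad / 2.0))


def polar_angle(eta):
    """Inverse relation: polar angle in radians for a given pseudorapidity."""
    return 2.0 * math.atan(math.exp(-eta))


# A jet orthogonal to the beams has eta close to zero; small angles give large |eta|.
for degrees in (90.0, 45.0, 10.0, 170.0):
    eta = pseudorapidity(math.radians(degrees))
    print(f"theta = {degrees:5.1f} deg -> eta = {eta:+.2f}")
```

Jets close to either beam direction get large pseudorapidity of opposite sign, which is why the text speaks of absolute values.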

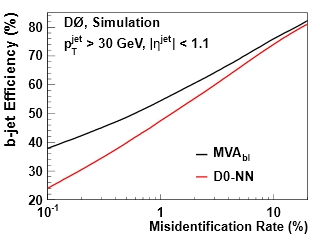

The graph below, taken from the b-tagging article, shows instead the performance of two different b-tagging algorithms in DZERO. I chose this graph because it is a very standard way of displaying the power of discrimination of such algorithms. On the x axis you find the fraction of jets not containing a b-quark which are incorrectly flagged as b-jets, and on the y axis you find the fraction of b-jets that are correctly b-tagged. The curves describe the possible "operating points" of the algorithms: by choosing the fraction of "false positives" on the x axis you can find the corresponding b-tagging efficiency on real b-quark jets. With multivariate techniques DZERO can easily obtain efficiencies above 50% for misidentification rates of 1%, which allows a great improvement in the observability of e.g. Higgs boson decays to b-quark pairs.
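The way such a curve is built can be sketched in a few lines: cut on the tagger output at different thresholds, and for each cut compute the fraction of non-b jets that pass (x axis) and the fraction of b-jets that pass (y axis). The scores below are invented toy numbers, not DZERO data, and the function names are hypothetical.

```python
def operating_points(b_scores, light_scores, thresholds):
    """Return a list of (mistag_rate, b_efficiency), one per threshold.

    A jet is "tagged" when its tagger output exceeds the threshold.
    """
    points = []
    for cut in thresholds:
        efficiency = sum(s > cut for s in b_scores) / len(b_scores)
        mistag = sum(s > cut for s in light_scores) / len(light_scores)
        points.append((mistag, efficiency))
    return points


def efficiency_at_mistag(b_scores, light_scores, max_mistag):
    """Best b-tagging efficiency among cuts whose mistag rate is <= max_mistag."""
    cuts = sorted(set(b_scores) | set(light_scores))
    allowed = [eff for mistag, eff in operating_points(b_scores, light_scores, cuts)
               if mistag <= max_mistag]
    return max(allowed) if allowed else 0.0


# Toy tagger outputs (invented for illustration only).
toy_b = [0.9, 0.8, 0.7, 0.4]
toy_light = [0.6, 0.3, 0.2, 0.1]

for mistag, eff in operating_points(toy_b, toy_light, [0.25, 0.5, 0.75]):
    print(f"mistag rate {mistag:.2f} -> b efficiency {eff:.2f}")
```

Choosing a tighter cut moves you to the left along the curve: fewer false positives, at the price of a lower efficiency on real b-jets.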

So I think we have to hope that the two new D0 publications are an indication of new good physics results in the near future, rather than just a reference for measurements already performed. For the time being, congratulations are due to our D0 colleagues for producing this detailed documentation. I know from past experience how difficult it is to find the manpower and the focus to produce similar articles, which contain crucial detailed information needed to understand other measurements but do not offer any specific physics result by themselves.

