Working around high levels of pesticides may translate into a high risk for heart trouble later, a new study suggests …. Compared to men who had not worked around pesticides, those who had the greatest exposure had a 45% higher risk for heart disease or stroke, researchers found.

The study in question, conducted by researchers in Hawaii, made use of a long-standing cohort of 7,557 middle-aged Japanese-American men to examine the association between pesticide exposure and cardiovascular disease. The cohort had been enrolled as part of the Honolulu Heart Program in 1965-1968 and was followed for up to 34 years to 1999.

The authors conducted two analyses of the association between pesticide exposure and risk of cardiovascular disease, after 10 years and 34 years of follow-up. In the abstract to the paper, they reported that there was a positive association between a high level of pesticide exposure and risk of cardiovascular disease (CVD) in the first 10 years of follow-up. They concluded:

These findings suggest that occupational exposure to pesticides may play a role in the development of cardiovascular diseases. The results are novel, as the association between occupational exposure to pesticides and cardiovascular disease incidence has not been examined previously in this unique cohort.

Like all reports of epidemiological findings, this paper needs to be examined carefully to see what information the researchers had, how exposure was defined, how the data were analyzed, and how convincing the results are. Cardiovascular disease is the leading cause of death in the US, and any new environmental risk factor for the disease, if prevalent in the general population, would be of great importance for public health.

But, as soon as I got into the actual details of the study, I realized that the reported association between pesticide exposure and CVD can only be sustained by failing to subject the data to critical analysis. In fact, there are many glaring deficiencies in the data, in the analysis, and in the conclusions.

Inadequate sample size

The cohort consisted of 7,557 men and was followed for up to 34 years. However, only seven percent of the cohort was exposed to pesticides (561/7557). Thus, 93 percent of participants had no occupational exposure to pesticides. Over the course of the study, 2,549 new cases of CVD were diagnosed in the cohort. The researchers attempted to measure the association between different levels of exposure to pesticides and CVD. However, when participants are partitioned by level of pesticide exposure and disease status, the data become very sparse and the risk estimates unstable.

Overly broad disease outcome

The authors originally wanted to examine the association between pesticide exposure and two different outcomes – coronary heart disease and stroke/cerebrovascular disease. However, due to the sample size problem, they were only able to analyze these two diseases in combination. While the two diseases share a number of risk factors, other risk factors differ, as do the natural histories of the diseases, so analyzing the two together blurs the specificity of the outcome being investigated.

Scanty exposure information

In a study like this, the quality and specificity of the exposure information is crucial. However, the authors failed to give the reader a clear description of the available information on pesticide exposure. They said that at entry into the study, in the mid-1960s, participants were asked about their occupation. I had to consult a 2006 paper to find the following: “Occupational exposure information collected during exam I (1965) was used in these analyses. Participants were asked questions about their present and usual occupation and the age that they started and finished working in these occupations.”

From the present study, we learn that the authors used a “scale” to assign a level of intensity of pesticide exposure for each usual occupation of each participant. But we learn from the 2006 paper that the scale, taken from the Occupational Safety and Health Administration (OSHA), estimated “potential for exposure,” not actual exposure. In addition to pesticides, exposure to “metals” and “solvents” was also calculated for each occupation. Exposure for each substance was classified into three categories: no exposure, low-moderate exposure, and high exposure.

It is important to stress that in the Honolulu Heart Study, information on pesticide exposure was self-reported and derived solely from the “usual occupation.” Participants were not asked any questions about whether they worked with pesticides on the job, and if so, what family of pesticides or what specific pesticides. This is not surprising since the Honolulu Heart Study was not designed to examine exposure to pesticides.

However, given that the authors used occupation as an indicator of potential pesticide exposure, it is surprising that they did not even present a breakdown by occupation, as one would expect. Furthermore, after assigning a level of exposure to pesticides, metals, and solvents, they did not present data showing how many participants were exposed solely to pesticides, or to different combinations of the three substances. The authors acknowledged that many participants had multiple exposures.

Finally, the authors made a choice to divide those with exposure into “low-moderate exposure” and “high exposure.” What is strange is that there are only 110 participants in the “low-moderate” exposure group, whereas there are four times as many (451 participants) in the “high” exposure group. The authors could have created exposure groups that would have been more balanced in terms of the number of participants. We will see that this becomes an issue when they present their results.
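To put the group sizes quoted above side by side, the arithmetic can be sketched in a few lines of Python. The counts are the ones cited in this article; the variable names are purely illustrative.

```python
# Group sizes as quoted in this article; the names are illustrative only.
COHORT_SIZE = 7557
exposed_groups = {"low-moderate": 110, "high": 451}

total_exposed = sum(exposed_groups.values())
share_exposed = 100 * total_exposed / COHORT_SIZE

print(f"any exposure: {total_exposed} men ({share_exposed:.1f}% of the cohort)")
for name, count in exposed_groups.items():
    print(f"{name}: {count} men ({100 * count / COHORT_SIZE:.1f}% of the cohort)")

# How lopsided are the two exposed groups relative to each other?
ratio_high_to_low = exposed_groups["high"] / exposed_groups["low-moderate"]
print(f"high vs. low-moderate group size: {ratio_high_to_low:.1f} to 1")
```

Seen this way, the "high" category holds the large majority of the exposed men, while the "low-moderate" category is a thin slice of an already small exposed minority.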

What do the results really show?

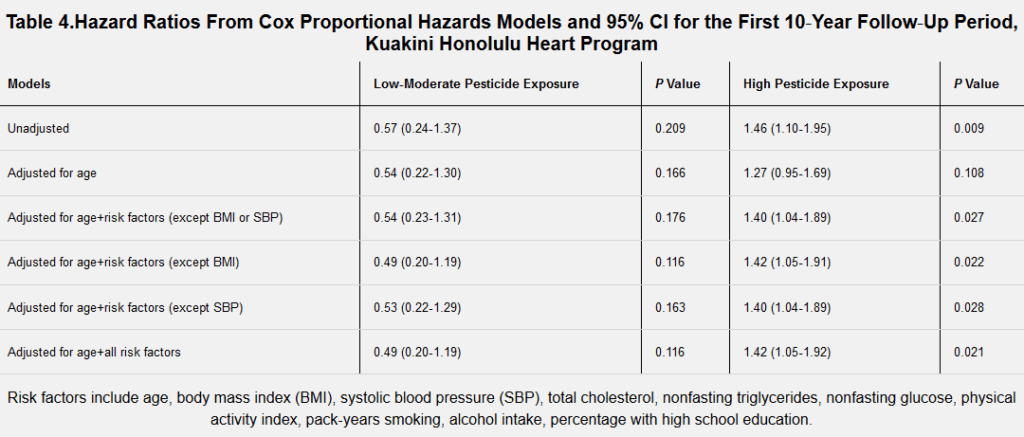

The main results are given in Tables 3 and 4 and in Figures 1 and 2 of the paper. The positive association between pesticide exposure and cardiovascular disease was observed only in men with “high” exposure and only in the analysis using 10 years of follow-up – not in the analysis using 34 years of follow-up. As seen in Table 4, the estimate of risk in the high exposure group, compared to the no exposure group, was 1.42 (95% confidence interval 1.05-1.92).

This means that “high pesticide exposure” is associated with a 42% increased risk of CVD relative to no exposure. This is a modest increase for this type of study. Notice, too, that the estimate only barely reaches statistical significance: the lower bound of the confidence interval, 1.05, sits just above 1.00, and an interval that included 1.00 would not be statistically significant. Testing for statistical significance permits the researcher to state whether a result is unlikely to have occurred merely by chance. By convention, a probability of five percent (one in twenty) or less is accepted as indicating that the result is unlikely to have occurred by chance.
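How close to the five percent threshold is this result? A rough back-calculation from the published interval gives a sense of it. The sketch below assumes the interval was built in the usual way, as the estimate plus or minus 1.96 standard errors on the log scale; that is an assumption about the paper's method, and the function names are illustrative.

```python
import math

Z_95 = 1.959964  # standard normal quantile behind a 95% interval


def se_from_ci(lower, upper):
    """Standard error of the log estimate, assuming a Wald-type 95% CI."""
    return (math.log(upper) - math.log(lower)) / (2 * Z_95)


def z_and_p(estimate, lower, upper):
    """z statistic and two-sided p-value against the null value of 1.0."""
    z = math.log(estimate) / se_from_ci(lower, upper)
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail area
    return z, p


# Figures quoted above from Table 4 of the paper.
z, p = z_and_p(1.42, 1.05, 1.92)
print(f"z = {z:.2f}, two-sided p = {p:.3f}")
```

Under that assumption the p-value comes out at roughly 0.02: below the conventional 0.05, but not by a wide margin for a single comparison drawn from several exposure groups and two follow-up periods.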

A striking feature of Table 4 is that the low-moderate exposure group has a reduced risk of CVD, and the reduction is roughly 50%. This reduced risk is not statistically significant, but there are only 110 participants in this exposure category (representing only about 1.5% of the cohort!) and only 5 cases of CVD. Going back to a point made above, if the authors had created more balanced exposure groups, the inverse association in the low-moderate group might have achieved statistical significance.
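To see how little five cases can tell us, consider a back-of-the-envelope bound on the precision of that estimate. The sketch assumes the common approximation that the variance of a log hazard ratio is about 1/d1 + 1/d0, where d1 and d0 are the numbers of events in the two groups being compared. The comparison group's event count is not used here, so dropping that term gives a best-case (narrowest possible) interval. The point estimate of 0.5 is the "roughly 50%" reduction mentioned above, not an exact figure from the paper.

```python
import math

Z_95 = 1.959964  # standard normal quantile for a 95% interval


def best_case_ci(estimate, events_in_group):
    """Narrowest plausible 95% CI given only the exposed group's event count.

    Assumes Var(log HR) is about 1/d1 + 1/d0 and ignores the 1/d0 term,
    so the real interval can only be wider than the one returned here.
    """
    se_floor = math.sqrt(1 / events_in_group)
    half_width = Z_95 * se_floor
    log_est = math.log(estimate)
    return math.exp(log_est - half_width), math.exp(log_est + half_width)


lower, upper = best_case_ci(0.5, 5)
print(f"best-case 95% CI: {lower:.2f} to {upper:.2f}")
```

Even in this best case the interval runs from a large reduction in risk to a modest increase, which is another way of saying that the low-moderate estimate is too imprecise to interpret.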

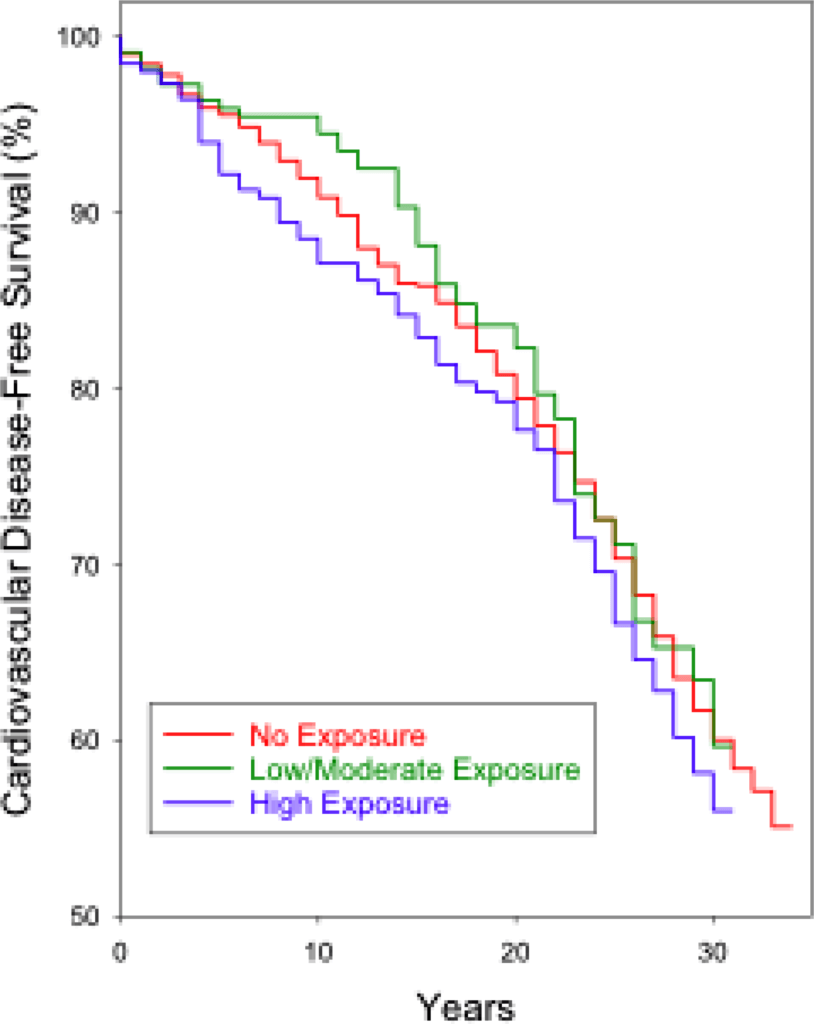

If the pesticide exposure variable is truly associated with CVD, we would expect to see some evidence of a dose-response relationship between exposure and disease. In other words, we might expect to see a slight indication of increased risk among moderately exposed individuals and a greater risk among heavily exposed individuals. The inverse association seen in the low-moderate group poses a problem, and, as seen in Figure 2, after 10 years of follow-up, the reduction in risk in the low-moderate group is greater than the increase in risk in the “high” exposure group.

Image: Figure 2 from the study.

If the low-moderate and high exposure groups had been more balanced in terms of the number of subjects, it is very likely that the risk estimate for the high exposure group would no longer be statistically significant. This raises the question of whether the researchers created the categories in order to achieve statistical significance in the high exposure group. In any event, they nowhere discuss why they created such numerically imbalanced exposure groups.

If the researchers had presented results of the contrast between “any occupational pesticide exposure” versus “no exposure” – a common way to examine data of this kind – the risk would have been smaller and, likely, not statistically significant.
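The paper's individual-level data are not available here, so this contrast cannot be reproduced exactly. A crude illustration is nonetheless possible by pooling the two published group estimates with inverse-variance weights on the log scale. Everything beyond the quoted figures is an assumption, and the function names are illustrative. The sketch takes the high-exposure standard error from the interval 1.05-1.92, treats the low-moderate estimate as 0.5 with a best-case standard error of sqrt(1/5) based on its 5 cases, and pretends the two estimates are independent even though they share a reference group.

```python
import math

Z_95 = 1.959964  # standard normal quantile for a 95% interval


def pool_log_estimates(estimates_and_ses):
    """Fixed-effect (inverse-variance) pooling of ratio estimates on the log scale."""
    weights = [1 / se ** 2 for _, se in estimates_and_ses]
    weighted_sum = sum(w * math.log(est) for w, (est, _) in zip(weights, estimates_and_ses))
    pooled_log = weighted_sum / sum(weights)
    pooled_se = math.sqrt(1 / sum(weights))
    return pooled_log, pooled_se


# High exposure: 1.42 (1.05-1.92), as quoted from Table 4.
se_high = (math.log(1.92) - math.log(1.05)) / (2 * Z_95)
# Low-moderate: roughly 0.5 with 5 cases; sqrt(1/5) is an assumed, best-case SE.
se_low = math.sqrt(1 / 5)

pooled_log, pooled_se = pool_log_estimates([(1.42, se_high), (0.5, se_low)])
pooled = math.exp(pooled_log)
ci_lower = math.exp(pooled_log - Z_95 * pooled_se)
ci_upper = math.exp(pooled_log + Z_95 * pooled_se)
print(f"pooled estimate: {pooled:.2f} ({ci_lower:.2f} to {ci_upper:.2f})")
```

Under these assumptions the pooled estimate falls below 1.42 and its interval includes 1.00. That is in line with the expectation stated above, though it is an illustration of the point rather than a reanalysis.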

A confounding problem

Both coronary heart disease and cerebrovascular disease/stroke are classic multifactorial diseases, with numerous risk factors, including smoking, body mass index, cholesterol, blood pressure, and physical activity. Any analysis that examines environmental exposures has to do a careful job of controlling for these personal risk factors in order to provide credible evidence for a novel risk factor. But given the sample size problem in this study, and the very poor quality of the exposure information, adequate adjustment for the many potential confounding factors represents a formidable challenge.

Another point should be mentioned. Because the authors only used the baseline information, they did not take into account changes in risk factors, such as smoking or blood pressure, that occurred during follow-up. Thus, if a smoker quit smoking 5 years after enrollment (thereby reducing his risk of CVD), that person would continue to be classified as a smoker throughout the study.

To fully appreciate how poorly suited the Honolulu Heart Study is to addressing the association between pesticide use and cardiovascular disease, it is helpful to compare that paper with a study that was designed to investigate the health effects of pesticide exposure.

In the mid-1990s, the National Cancer Institute launched the Agricultural Health Study (AHS), a prospective cohort study of roughly 54,000 pesticide applicators in Iowa and North Carolina. At enrollment, participants were asked about their lifetime use of 50 pesticides, including the number of years and days per week each pesticide was used. From this information and from additional information collected at follow-up, the researchers were able to compute three measures of cumulative lifetime exposure to specific pesticides.

The cohort has been followed for over 20 years. The large size of the cohort and the detailed information on pesticide use have made possible informative studies of exposure to specific pesticides and various health outcomes, but mainly cancers.

The example of the Agricultural Health Study helps us to understand why the results from the Honolulu Heart Study are so inconsistent and uninformative. How do the authors deal with the serious limitations of their data and their analysis that I’ve pointed out above? They addressed these limitations only briefly. For example, they acknowledged the lack of information on pesticides and the small number of subjects in the “low-moderate” exposure group.

But they then proceeded to put the best possible gloss on their woefully limited exposure data and their inconsistent results, implying that the latter provide evidence that pesticide exposure increases the risk of cardiovascular disease. They skirt past the issues surrounding the quality of the data and the analysis to make a causal interpretation, knowing that the words “pesticides” and “cardiovascular disease” will attract the interest of some journal eager to increase its circulation.

This paper, while not important in itself, is a symptom of a troubling situation in epidemiology and public health research. The paper underwent peer review and was published in what looks like a reputable journal.* But it is clear that the peer reviewers either did not know how to evaluate an epidemiologic study or could not be bothered to do a serious job.

Epidemiologists, statisticians, and other health researchers need to publish in order to advance in their careers. But the public and journalists – the consumers of information about health – need to be aware of something that researchers know well: there is no paper so dreadful that it cannot be published somewhere.

*The Journal of the American Heart Association has a “respectable” impact factor (5.1); however, this is far below the two leading journals: Circulation (23.1) and the Journal of the American College of Cardiology (16.8).

Geoffrey Kabat is an epidemiologist, the author of over 150 peer-reviewed scientific papers, and, most recently, of the book Getting Risk Right: Understanding the Science of Elusive Health Risks. Follow him on Twitter @GeoKabat. This article is reprinted from Genetic Literacy Project, a 501(c)(3) nonprofit whose mission is to educate consumers and encourage cooperation among academic and industry researchers to promote the public interest.
