Real-time brain scans coupled with a machine-learning algorithm can reveal whether a person has a memory of a particular subject, but with a little concentration people can easily hide their memories from the computer.

Memory matters in areas such as eyewitness testimony, medicine and even marketing, so programs that can read a person's brain scan data and infer whether that person is experiencing a memory could prove useful in all of them.

But with just a little coaching and concentration, subjects can easily obscure real memories on brain scans, or even fabricate ones that look real. The decoders work well with cooperative subjects, but in high-stakes situations it is important to know that they can be spoofed.

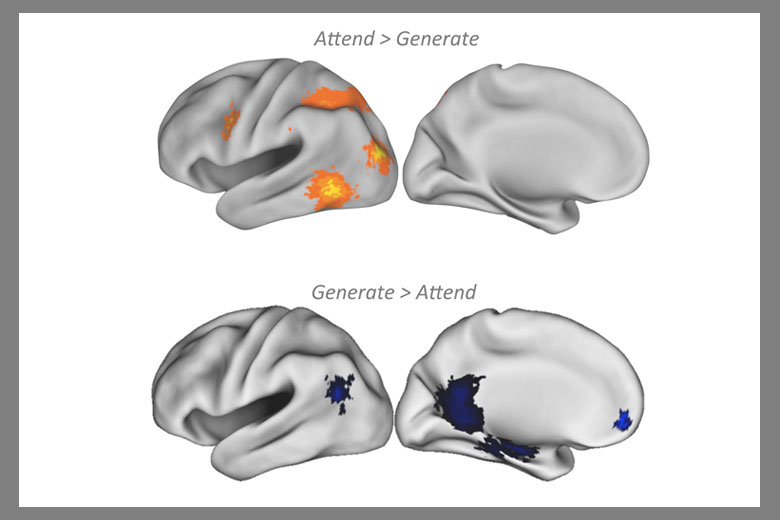

Top images show brain activity as a participant pretends to see a familiar face for the first time; bottom images show brain activity as the participant pretends to recognize a novel face. Both instances fooled the memory decoding algorithm into thinking the subject was telling the truth. Credit: Stanford

Although the process of creating, storing, recalling and replaying a memory is complex, the fact that different parts of the brain play distinct roles in memory allows neuroscientists to pair brain activity signals with machine learning pattern analyses to detect complex patterns that signal whether a person is remembering a target stimulus or perceiving it as novel.

Anthony Wagner, a professor of psychology and neuroscience at Stanford, and study lead author Melina Uncapher recruited 24 subjects to participate in a two-day test. On the first day, the subjects had their brains scanned in an fMRI machine while seeing photos of 200 novel faces, with instructions to try to remember the faces. They were given two seconds to look at each face, and then eight more seconds to create a "story" about that person – a trick that would possibly help them remember the face. After a short break, the faces were shuffled and the subjects repeated the training. The algorithm was 67 percent accurate in detecting the presence or absence of memories when subjects were cooperative or truthful.

On the second day, the subjects returned to the fMRI machine, this time to see 400 faces – an additional 200 novel faces were mixed in with the 200 faces they had seen the previous day. For the first half of the new, combined batch of photos, the subjects were instructed to answer truthfully, "Do you remember seeing this face?" This allowed the algorithm to set a baseline of brain activity for when each subject answered truthfully.
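To make the idea of a baseline concrete, here is a minimal sketch of a pattern decoder trained on truthful trials. It is not the Stanford team's method: it swaps in a simple nearest-centroid classifier, and the data are synthetic numbers generated on the spot. The feature count, class centers, noise level and every function name are assumptions chosen for illustration only.

```python
import random

def make_trials(n, center, noise, rng):
    """Draw n synthetic activity patterns scattered around a class center."""
    return [[c + rng.gauss(0.0, noise) for c in center] for _ in range(n)]

def centroid(patterns):
    """Average the patterns feature by feature."""
    return [sum(column) / len(patterns) for column in zip(*patterns)]

def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def train_decoder(seen_trials, novel_trials):
    """Store one mean pattern per memory state (the 'baseline')."""
    return {"seen": centroid(seen_trials), "novel": centroid(novel_trials)}

def decode(decoder, pattern):
    """Return the label whose mean pattern lies closest to this trial."""
    return min(decoder, key=lambda label: squared_distance(decoder[label], pattern))

def accuracy(decoder, labeled_trials):
    """Fraction of (label, pattern) pairs the decoder labels correctly."""
    hits = sum(decode(decoder, pattern) == label for label, pattern in labeled_trials)
    return hits / len(labeled_trials)

rng = random.Random(0)               # fixed seed so the sketch is repeatable
N_FEATURES = 20                      # hypothetical number of features per trial
SEEN_CENTER = [0.3] * N_FEATURES     # made-up class centers, not real scan data
NOVEL_CENTER = [-0.3] * N_FEATURES

# Synthetic "truthful" trials used to set the baseline.
decoder = train_decoder(
    make_trials(100, SEEN_CENTER, 1.0, rng),
    make_trials(100, NOVEL_CENTER, 1.0, rng),
)

# Fresh synthetic truthful trials, used only for evaluation.
held_out = [("seen", p) for p in make_trials(100, SEEN_CENTER, 1.0, rng)]
held_out += [("novel", p) for p in make_trials(100, NOVEL_CENTER, 1.0, rng)]
print(f"held-out accuracy: {accuracy(decoder, held_out):.2f}")
```

The point of the sketch is the workflow rather than the numbers: the decoder learns what "seen" and "novel" look like only from trials where the labels can be trusted, and it is then scored on trials it has not encountered.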

For the remaining half of the study, the subjects were told to try to hide their memory states. If they thought they had seen the face before, they were to say it was new; if it was a novel face, they were to report they had seen it the previous day.

"We gave them two very specific strategies: If you remember seeing the face before, conceal your memory of that face by specifically focusing on features of the photo that you hadn't noticed before, such as the lighting or the contours of the face, anything that's novel to distract you from attending to the memory," Uncapher said. "Likewise, if you see a brand-new face, think of a memory or a person that this face reminds you of and try to generate as much rich detail about that face as you can, which will make your brain look like it's in a state of remembering."

With just two minutes of coaching and training, the subjects became proficient at fooling the algorithm: The accuracy of the decoder fell to 50 percent, or no better than a coin-flip decision.
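One way to see why 50 percent counts as a coin flip is to ask how often pure guessing would score at least as well. The sketch below computes an exact one-sided binomial tail for the two accuracy figures quoted in this article. The trial count of 100 is a hypothetical value picked for illustration and is not taken from the study, and the function name is likewise made up.

```python
from math import comb

def p_value_above_chance(correct, total, chance=0.5):
    """Exact one-sided binomial tail: P(at least `correct` hits by guessing)."""
    return sum(
        comb(total, k) * chance**k * (1 - chance) ** (total - k)
        for k in range(correct, total + 1)
    )

TRIALS = 100  # hypothetical trial count, chosen only for illustration

for rate in (0.67, 0.50):
    correct = round(rate * TRIALS)
    p = p_value_above_chance(correct, TRIALS)
    print(f"{rate:.0%} of {TRIALS} trials: p = {p:.4f}")
```

Under these assumptions, a guesser would rarely reach 67 percent by luck, while a score of 50 percent is exactly what guessing would be expected to produce.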

The subjects reported that it was easier to make up new memories about novel faces than it was to bury real memories from the previous day. The brain scans reflected this shift. When subjects looked at a novel face and pretended they had seen it before, activity spiked in parts of the brain responsible for recalling memories. A similar flip in brain activity was recorded when subjects tried to suppress the memory of a face seen previously.
