Of course I love each and every one of my faithful readers like good teachers love their pupils, but among the varied crowd, the readers which I am most happy to host here are students and amateurs, because they provide me with true motivation for spending my time writing popularization articles. Without them, many of my posts would lose their meaning.

Occasionally, my travel brings me to meet -serendipitously or not- some of my readers. Such was the case a few weeks ago, when at a seminar I was giving for a LHCb working group I had the pleasure to chat with a Ph.D. student, F., who let me know she is a regular reader of this site. On that occasion, however, something less common happened. F. explicitly asked me (she said "may I request") to write a specific article!

I felt obliged, and am now doing my best to comply with the request (with a longish delay). The topic is the non-trivial one of Likelihood fits - fits to the data using the method of Maximum Likelihood.

Now, before you get to read the following text, let me be clear about one point: I am not a real, certified expert on the topic (but look out, I might become one). I have used likelihoods several times for my analyses of particle physics reactions, yet my knowledge of the topic is by and large based on practice, the reading of some but not all the basic textbooks, and -maybe my best asset- quite a few years of exposure to how data analyses are performed in High-Energy Physics experiments.

All in all, I would say I cannot be trusted too much on the technical details, although I have developed a good instinct. I thus think I can make an attempt at providing some useful inputs for whomever is wondering what the hell is the business with these functions. Actually, in fact, the fact I am not a statistician will probably ease the job of providing you with an explanation which, although maybe not rigorous, is simpler to digest.

In fact, I want to try and explain the basic concepts by using examples as simple as possible: learning by examples is easy and effective. Whether this post will do any good to the person who originally requested it, however, is another matter -I think the explanation and the example I offer below are a bit too simple to be of any use to her, but hopefully a second installation on this topic will be more on target.

So, what is the maximum likelihood method ?

Likelihood fits are a very powerful tool in physics data analysis; their use allows to determine the most likely value of one or more unknown parameters by combining information coming from multiple sources.

Likelihood fits are a very powerful tool in physics data analysis; their use allows to determine the most likely value of one or more unknown parameters by combining information coming from multiple sources.In general, a likelihood is a mathematical expression which is the product of probability density functions P() of one or a few random variables x and one or a few unknown parameters t (in general, a vector), P(x,t).

The form of the function is supposedly known: it describes the probability that the variables assume specific values within their allowed range. Given a set of repeated measurements of the variable x (let us denote them by x_i), one defines L as the product of the values of P(x_i,t): L, being the product of probability density functions, is a function of the unknown parameter(s) t. In mathematical notation, one simply writes

The best estimate of the unknown parameter t, given the observed set of data x_i, is then given by solving the equation

Usually rather than explicitly solving the above equation, the likelihood gets first converted into its logarithm (using the convenient property of the logarithm, of being a monotonous function of its argument). The logarithm of a product is a sum, so we just end up with having to maximize log(L) -or better, minimize its opposite -log(L) - by direct calculus or, in most instances, by means of a standard fitting program. The best estimates of the unknown parameters are the values which maximizes the likelihood.

The above summary may sound mysterious, but likelihoods are instead quite simple things -even in the most complex cases, they can be constructed by combining blocks together. These blocks are not plastic Lego blocks but functions, yet the concept helps looking at some of the nastiest likelihoods with more nonchalance.

A simple example

Before we discuss a nasty problem, however, let me make a simple example. If you throw a die, you know there are six possible results of its landing on a flat surface: one of the six integers painted on the six sides will come up on top. If you have no prior knowledge that the die is loaded, the "probability density function" (PDF) of the outcomes is nothing but a uniform distribution taking on the six possible values. Since the outcome of the "experiment" (the throwing of the die) is constrained to those six values, it follows that the probability density function has the value 1/6=0.167 for all outcomes.

Before we discuss a nasty problem, however, let me make a simple example. If you throw a die, you know there are six possible results of its landing on a flat surface: one of the six integers painted on the six sides will come up on top. If you have no prior knowledge that the die is loaded, the "probability density function" (PDF) of the outcomes is nothing but a uniform distribution taking on the six possible values. Since the outcome of the "experiment" (the throwing of the die) is constrained to those six values, it follows that the probability density function has the value 1/6=0.167 for all outcomes.It is thus "normalized" in a way that fulfils a simple law of probability: if we said that the experiment consisted in throwing the die and reading the value shown by the top side, then we know that a value was read with probability one, so the sum of the disjunct probabilities of occurrence of all possible readings must also be one. Such is the case for 6 times 1/6, in fact.

Not all probability density functions are flat: if I load the die with lead paint or some other trick on the side showing the number "1", which is usually opposite to the side showing a 6, the PDF of the "die throwing" experiment changes, and is not uniform any more: P(6) will now increase to anything from 1/6 (no detectable effect of the lead) to almost 1.0 (a very heavy load, which forces the die to always land on the side showing the number 1). Consequently, since the sum of P(1)+P(2)+P(3)+P(4)+P(5)+P(6) must still be 1.0, the other five probabilities P(1)...P(5) must decrease from 1/6 to smaller values; the one which will see the largest decrease is likely to be P(1), but let us discuss statistics and not dynamics here.

Let us then consider the experiment of determining whether a die is loaded such that number 6 appears more often than all others. We might want to test a PDF expressed by the simple form:

The above is a rather crude model, but it is sufficient for our purpose here. It describes a decrease of the probability to observe the value "1" from the nominal probability, a smaller decrease to observe the values "2" through "5", and an increase of the probability of observing "6". The increase in the probability of "6" is our single unknown parameter t. Let us use as base data the following set of numbers, relative to 20 die-throwings -already ordered by outcome:

The likelihood construction works as follows:

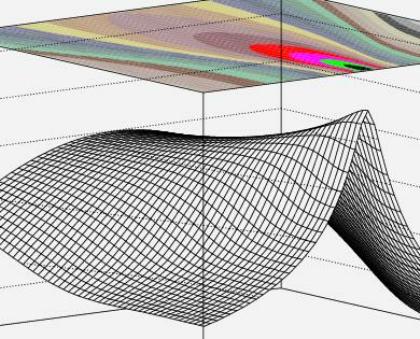

As you see, this is a function of our single unknown parameter t. It is displayed in the figure on the right (where the horizontal axis goes from -0.166 to 0.333, the range of validity of the function). A maximization of -log(L) leads to extracting the derivative:

As you see, this is a function of our single unknown parameter t. It is displayed in the figure on the right (where the horizontal axis goes from -0.166 to 0.333, the range of validity of the function). A maximization of -log(L) leads to extracting the derivative: which has only one solution in the allowed range: t=0.071687. We thus find a solution consistent with the existence of a load, causing the number "6" to have a probability enhanced by 7.2%, i.e. from 16.7% to 23.9%. This solves our problem nicely, however one question immediately arises:is this a significant observation ? In other words, if you threw a unknown die twenty times and obtained the distribution shown above, would you conclude that the die is loaded ?

Of course not. This is provided by extracting the second derivative of the likelihood function, whose inverse yields an estimate of the variance. In our case the square root of the variance -the standard deviation of our result- turns out to be 0.084: the measurement of the load is utterly inconclusive, yielding t=0.072+-0.084: this is compatible with no load at all, well within one standard deviation. Of course, it was to be expected, since twenty throws of a die cannot give a definite answer in most cases.

If you are stubborn, you can try throwing the die 1980 more times. Suppose you get the same distribution as before:

Is this significant ? Of course, the same procedure outlined above provides the minimum at 0.072 we found earlier -this follows from observing that we, nor the maximum, do not care about the value of the likelihood, but its derivative; however, this time the square root of the variance is 0.0084, and a load is thus established: the result now clearly points to an increase of the probability of getting a "6" by 7.17+-0.84% -a number significantly different from zero.

However, the increased precision of this latter test evidences a problem: our model appears to predict that the occurrence of the value "1" is smallest, but this is not borne out by the data. In other words, while we have obtained a well-behaved maximum for our likelihood, we must question whether the fit to our model is a good one. If the model were wrong, our result (the establishment of a load) would then be unreliable.

This is where the maximum likelihood method leaves us on our own. While, in fact, there exist methods to verify the goodness-of-fit using the result of the likelihood maximization, they are artificial and "external" to the method, since they are not directly related to the value of likelihood at maximum or other statistical figures of merit.

In our simple example, we can resort to computing the global

for four degrees of freedom (six independent counts minus one parameter minus one). This is largish, but not too bad. That is to say, we do not get a notitia criminis about our model for the load of the die being inappropriate to describe the data, and on the other hand we note that our model does include the "no load" case (when t=0). So we are bound to deduce that the die is indeed loaded, and we accept the estimate provided by the likelihood maximization, pending a more detailed study of possible influences of a load on the probability of the six values.

Above we have used the maximum likelihood method to compute the solution of a very simple problem, one for which the answer was trivial to obtain. In the next installment of this post, I will try to give a more state-of-the-art example of use of the method of maximum likelihood. In the meantime, you might ponder on the fact that everything you strictly need to know in order to use the method can be extracted from what is exemplified above.

Comments