So it is only normal for me to try to go against that particular cliché here, and talk about things I will publish in the future. Admittedly, it is a bit of a minefield (it is never easy to be a nonconformist), but I will try to avoid stepping on the most obvious triggers (violations of confidentiality, scooping risks, impossible promises).

1 - A new tool for anomaly detection

One research article I can confidently promise will appear soon is titled "Anomaly Detection in the Copula Space". The algorithm I developed is designed around the needs of LHC research, and specifically the problem of being sensitive to new physics signals that theorists have never thought of. It is called "RanBox", and it is based on the idea that if you standardize your data so that they fit in a unit hypercube in N dimensions (you can always do that, by virtue of a theorem first demonstrated by Sklar in 1959), then the search for overdense regions can be cast as multiple hypothesis testing of a Binomial ratio. If I see K events in an N-dimensional interval surrounding a search box, and the ratio of the box volume to that of the surrounding interval is tau, I expect to find tau*K events in the box; if I find more, it may be a fluke or the contribution of an extra process.
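The two ingredients described above can be sketched in a few lines. What follows is a minimal illustration, not the RanBox code: it assumes the map to the unit hypercube is done feature by feature with the empirical CDF (rank divided by the number of events), and it uses a standard binomial on/off test in which, if the density is the same inside the box and in the surrounding interval, each of the events found in either region lands in the box with probability tau/(1+tau). The function names and the parameters `n_box`, `n_sideband` and `tau` are hypothetical.

```python
import math


def to_copula_space(column):
    """Map one feature to (0, 1] through its empirical CDF (rank / n).

    Applied column by column, this sends every event into the unit
    hypercube with approximately uniform marginals.
    """
    n = len(column)
    order = sorted(range(n), key=lambda i: column[i])
    u = [0.0] * n
    for rank, i in enumerate(order, start=1):
        u[i] = rank / n
    return u


def binomial_sf(k, n, p):
    """P(X >= k) for X ~ Binomial(n, p), summed in log space to avoid overflow."""
    if k <= 0:
        return 1.0
    if k > n:
        return 0.0
    total = 0.0
    for j in range(k, n + 1):
        log_pmf = (math.lgamma(n + 1) - math.lgamma(j + 1) - math.lgamma(n - j + 1)
                   + j * math.log(p) + (n - j) * math.log1p(-p))
        total += math.exp(log_pmf)
    return min(total, 1.0)


def box_pvalue(n_box, n_sideband, tau):
    """Binomial-ratio p-value for an excess of events in a search box.

    Hypothetical parameterization: n_box events inside the box, n_sideband
    events in the surrounding interval, tau = V_box / V_sideband (tau > 0).
    Under the no-excess hypothesis each of the n_box + n_sideband events
    falls in the box with probability tau / (1 + tau).
    """
    p = tau / (1.0 + tau)
    return binomial_sf(n_box, n_box + n_sideband, p)
```

For example, `box_pvalue(30, 100, 0.1)` asks how unusual 30 events in the box are when about 10 would be expected from the 100 events around it. Since an algorithm like this scans many candidate boxes, the smallest p-value it finds must still be corrected for the number of tests performed; the sketch leaves that step out.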

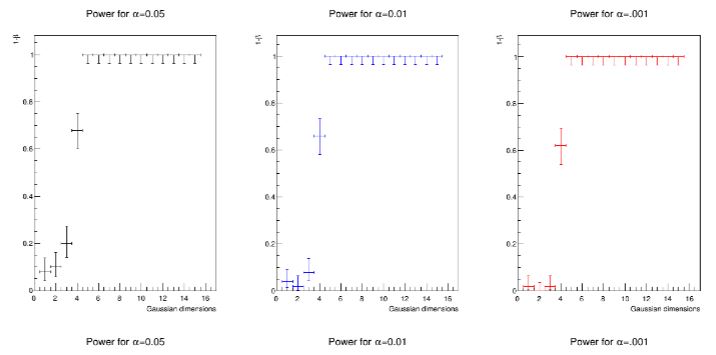

The idea of RanBox is quite simple, and yet the algorithm performs surprisingly well. For example, consider a standardized problem where a small signal with a multivariate Gaussian distribution in some of the features hides amid a flat background in a 20-dimensional space, in a total data sample of 5000 events: RanBox can confidently locate it even if the signal fraction is below 1%, and even if the number of Gaussian features is just five or six.
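A toy sample of the kind just described can be generated as follows. This is a sketch under stated assumptions, not the benchmark used in the article: the signal is taken to be Gaussian in the first `n_gauss` features with a hypothetical mean of 0.5 and width of 0.05 per feature (a multivariate Gaussian with diagonal covariance, truncated to the unit interval), and uniform in the remaining ones.

```python
import random


def make_toy_sample(n_events=5000, n_dims=20, n_signal=50, n_gauss=5,
                    mean=0.5, sigma=0.05, seed=1):
    """Flat background in [0, 1]^n_dims plus a small Gaussian signal.

    Hypothetical choices: the signal is Gaussian (independent per feature)
    in the first n_gauss features and flat elsewhere; Gaussian draws are
    repeated until they fall inside [0, 1]. Returns (events, labels) with
    label 1 for signal and 0 for background.
    """
    rng = random.Random(seed)
    events, labels = [], []
    for _ in range(n_events - n_signal):
        events.append([rng.random() for _ in range(n_dims)])
        labels.append(0)
    for _ in range(n_signal):
        x = [rng.random() for _ in range(n_dims)]
        for d in range(n_gauss):
            v = rng.gauss(mean, sigma)
            while not 0.0 <= v <= 1.0:  # truncate to the unit interval
                v = rng.gauss(mean, sigma)
            x[d] = v
        events.append(x)
        labels.append(1)
    return events, labels
```

With the defaults, the signal makes up 50 of the 5000 events, i.e. a 1% fraction; lowering `n_signal` or `n_gauss` makes the search harder.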

Above, the "power" of the search (the fraction of times that a true signal is correctly identified, when one allows oneself to wrongly claim a signal with 5%, 1%, or 0.1% probability, left to right) is studied as a function of the number N_g of distinguishable features of the signal, which amounts to only 50 events in a 5000-event sample. If the type-1 error rate alpha is 0.1%, the power is larger than 50% for N_g down to 4.
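The definition of power used above can be turned into a short estimator from pseudo-experiments. This is a generic sketch, assuming one has a test statistic (larger meaning more signal-like) computed on many background-only samples and on many samples with injected signal; the function name is hypothetical. The critical value is the one that background-only samples exceed with probability alpha, and the power is the fraction of signal samples beyond it.

```python
def power_at_alpha(null_stats, signal_stats, alpha):
    """Estimate the power of a test at type-1 error rate alpha.

    null_stats:   test statistics from background-only pseudo-experiments
    signal_stats: test statistics from pseudo-experiments with signal
    alpha:        allowed false-claim probability (e.g. 0.05, 0.01, 0.001)
    """
    ranked = sorted(null_stats, reverse=True)
    n_allowed = int(alpha * len(ranked))  # null trials allowed above the cut
    if n_allowed >= len(ranked):
        raise ValueError("alpha too large for the number of null trials")
    threshold = ranked[n_allowed]
    return sum(s > threshold for s in signal_stats) / len(signal_stats)
```

Note that at alpha = 0.1% one needs several thousand background-only trials for the critical value to be estimated with any reliability.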

I already blogged about RanBox two years ago, but now I am about to publish the article that describes it in detail, along with Martina Fumanelli, Chiara Maccani, Marija Mojsovska, Bruno Scarpa and Giles Strong. One thing I am quite proud of is that it will be the first multi-author paper I publish which has 50% female authors (three undergraduate students who did studies of the algorithm under my supervision).

2 - Convolutional Neural Networks for muon energy regression

Moving on, another article that is in the drafting stage (the results have already been produced) concerns a study of how well very energetic muons can be measured in a calorimeter. Muons lose very little energy in dense materials, so we rely on the curvature of their trajectory when they travel in a strong magnetic field. For energies above 1 TeV, however, the curvature is so small that the measurement becomes very imprecise.
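Why the curvature measurement degrades can be seen from the textbook sagitta relations, assuming a track of transverse momentum p_T crossing a uniform field B over a length L and neglecting multiple scattering; these are standard formulas, not results of the study:

$$
p_T\,[\mathrm{GeV}] \simeq 0.3\,B\,[\mathrm{T}]\;R\,[\mathrm{m}],
\qquad
s \simeq \frac{L^2}{8R} = \frac{0.3\,B\,L^2}{8\,p_T},
\qquad
\frac{\sigma_{p_T}}{p_T} = \frac{\sigma_s}{s} = \frac{8\,p_T\,\sigma_s}{0.3\,B\,L^2}
$$

With illustrative values B = 3.8 T and L = 1 m, a 1 TeV muon has a sagitta of about 0.3 × 3.8 × 1 / (8 × 1000) m ≈ 0.14 mm, and at fixed position resolution σ_s the relative momentum resolution grows linearly with p_T.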

We (Jan Kieseler, Giles Strong, Lukas Layer and myself, now also helped by undergraduate student Filippo Chiandotto) already demonstrated, in a preprint we published last August, that one can exploit the subtle pattern of photon radiation that muons emit when they traverse the strong electric field of atoms to estimate their energy. Now we are going to show how convolutional neural networks can effectively recover the resolution for multi-TeV muons, keeping them on the menu of useful probes of new physics in searches at future colliders.

3 - A Learning Nearest-Neighbour Algorithm

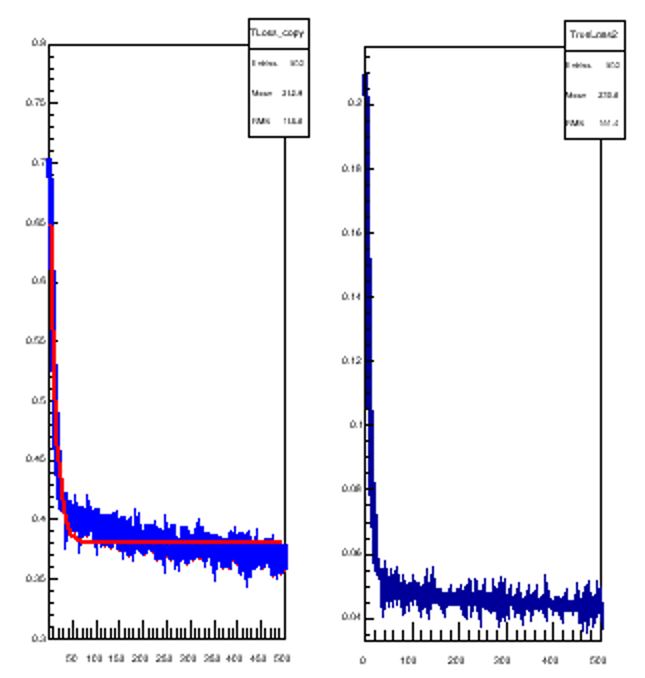

With the same team I am also writing a paper on a very powerful version of the so-called "k-Nearest Neighbour" algorithm. The kNN algorithm is a very useful tool for classification and regression, but also just for clustering, in multi-dimensional spaces. The novelty here is to massively overparametrize it, so that it effectively becomes a tool you can train as you train a neural network. I will not disclose the details here, but the article will show the performance of the algorithm on the same task as the article on muon energy regression I mentioned above. Perhaps only a teaser: the figure above shows the loss function of the algorithm as it learns how to best regress muon energy. The loss is composed of two parts (the two panels in the graph), which the algorithm learns to minimize together.
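For readers unfamiliar with kNN regression, here is the plain textbook version as a baseline. It is emphatically not the overparametrized, trainable algorithm of the paper, whose details are not disclosed: the prediction is the inverse-distance-weighted mean of the targets of the k nearest training points under the Euclidean metric, and the function name and the small `eps` regulator are illustrative choices.

```python
import math


def knn_regress(train_x, train_y, query, k=5, eps=1e-12):
    """Textbook kNN regression with inverse-distance weights.

    train_x: list of feature vectors; train_y: list of targets;
    query: feature vector to predict for. eps avoids division by zero
    when the query coincides with a training point.
    """
    nearest = sorted(
        (math.dist(x, query), y) for x, y in zip(train_x, train_y)
    )[:k]
    weights = [1.0 / (d + eps) for d, _ in nearest]
    return sum(w * y for w, (_, y) in zip(weights, nearest)) / sum(weights)
```

In this form nothing is learned: the neighbours, the metric and the weights are all fixed by hand, which is precisely what makes a trainable version an interesting extension.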

4 - A White Paper on Differentiable Programming for Experimental Design Optimization

This is an article we are drafting within the MODE collaboration, which I have coordinated since last year. It will describe the state of the art in differentiable programming and its applications to fundamental physics research, and then discuss the way we want to use those techniques for the end-to-end optimization of the design of experiments that base their functioning on the interaction of radiation with matter - a loose definition that includes particle collider detectors, hadrontherapy facilities, muon tomography apparatuses, etcetera. I expect that writing this article will keep us busy until the end of the summer, or even a bit further, but it will be a very important work on which we will base our future studies.
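To give a feel for what differentiable programming means in this context, here is a deliberately tiny sketch. It implements forward-mode automatic differentiation with dual numbers and uses the resulting exact derivative to tune a single design parameter by gradient descent. The objective `a/t + b*t` is a hypothetical stand-in for a figure of merit that improves with a design parameter t through one effect and worsens through another; it is not a MODE model, and all names are illustrative.

```python
class Dual:
    """Number val + der*eps with eps**2 = 0; der carries the derivative."""

    def __init__(self, val, der=0.0):
        self.val, self.der = val, der

    @staticmethod
    def _lift(other):
        return other if isinstance(other, Dual) else Dual(other)

    def __add__(self, other):
        o = self._lift(other)
        return Dual(self.val + o.val, self.der + o.der)

    __radd__ = __add__

    def __mul__(self, other):
        o = self._lift(other)
        return Dual(self.val * o.val, self.der * o.val + self.val * o.der)

    __rmul__ = __mul__

    def __truediv__(self, other):
        o = self._lift(other)
        return Dual(self.val / o.val,
                    (self.der * o.val - self.val * o.der) / o.val ** 2)

    def __rtruediv__(self, other):
        return self._lift(other) / self


def toy_objective(t, a=4.0, b=1.0):
    """Hypothetical figure of merit: a/t falls with t, b*t grows with it."""
    return a / t + b * t


def optimize_design(t0=1.0, lr=0.1, steps=200):
    """Gradient descent on t, with derivatives from the dual numbers."""
    t = t0
    for _ in range(steps):
        grad = toy_objective(Dual(t, 1.0)).der  # exact d(objective)/dt
        t -= lr * grad
    return t
```

Here `optimize_design()` converges to the analytic optimum t = sqrt(a/b) = 2. In real applications the objective is a differentiable simulation of the detector and of the reconstruction, and frameworks such as PyTorch or JAX provide the derivatives automatically (usually in reverse mode, which scales better to many parameters).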

5 - Optimized Detectors for Muon Tomography

Finally, together with a few colleagues within the MODE collaboration we are developing software for the end-to-end optimization of muon imaging apparatuses, using differentiable programming in PyTorch. I can't tell you more about this, but I promise it will be an interesting paper!

What else?

Apart from mentioning that, less than a month ago, I submitted for publication a review article on multivariate analysis methods together with the members of the AMVA4NewPhysics network (you can read a summary of the results in this post), I am also bound to mention the many articles that the CMS Collaboration publishes, at a pace of about 100 per year. I cannot claim that I contribute to all those papers, of course, but I am involved in several different ways in the screening, the review, and the advising of the analysts who produce the results we publish. In addition, on a time scale of probably one more year, I foresee a publication in the area of B physics to which my group (notably Hevjin Yarar) and I will contribute more than the standard minimal share, and another publication using CMS Open Data that applies the INFERNO algorithm to a top quark cross section measurement (stemming from the work of Lukas Layer). More on that, however, in a different post.

In summary, while I could lead a comfortable life by exploiting my membership in CMS, which guarantees a very large throughput of scientific publications (although these typically have of the order of 3000 authors), I am extremely busy this year with a number of exciting new projects! The only problem with this is that I can devote very little time to my usual pastimes... But I reason that I am already 55 years old, and if I can still write code and produce valuable research I have to seize the chance now, as in a few years I will be good for nothing, if still alive!

---

Tommaso Dorigo (see his personal web page here) is an experimental particle physicist who works for the INFN and the University of Padova, and collaborates with the CMS experiment at the CERN LHC. He coordinates the MODE Collaboration, a group of physicists and computer scientists from eight institutions in Europe and the US who aim to enable end-to-end optimization of detector design with differentiable programming. Dorigo is an editor of the journals Reviews in Physics and Physics Open. In 2016 Dorigo published the book "Anomaly! Collider Physics and the Quest for New Phenomena at Fermilab", an insider view of the sociology of big particle physics experiments. You can get a copy of the book on Amazon, or contact him to get a free pdf copy if you have limited financial means.
