Locality, the principle that measuring one particle cannot influence a measurement made on a distant one, implies that there is a limit to how strongly two particles can be correlated. John Bell expressed this limit as a mathematical inequality and presented scenarios that violate it, following the predictions of quantum mechanics instead. Since then, physicists have tested Bell's theorem by measuring the properties of entangled quantum particles in the laboratory. Essentially all of these experiments have shown that such particles are correlated more strongly than would be expected under the laws of classical physics, findings that support quantum mechanics.
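One widely used form of Bell's limit is the CHSH inequality (Clauser, Horne, Shimony and Holt, 1969), a later reformulation rather than Bell's original formula. Each side picks one of two settings, records outcomes of +1 or -1, and the four average products are combined into S = E(a,b) - E(a,b') + E(a',b) + E(a',b'). The sketch below, assuming ideal detectors and a spin-singlet pair, enumerates every deterministic local strategy to show that |S| never exceeds 2, then evaluates the quantum prediction at the standard CHSH angles; the angle values and function names are illustrative choices.

```python
import itertools
import math

def chsh(e):
    """CHSH combination S from correlations e[(x, y)], x = Alice's setting, y = Bob's."""
    return e[(0, 0)] - e[(0, 1)] + e[(1, 0)] + e[(1, 1)]

def local_bound():
    """Largest |S| when every outcome is fixed in advance by a local hidden variable."""
    best = 0
    # The hidden variable fixes Alice's outcomes (A0, A1) and Bob's (B0, B1), each +1 or -1.
    for a0, a1, b0, b1 in itertools.product((1, -1), repeat=4):
        alice, bob = (a0, a1), (b0, b1)
        e = {(x, y): alice[x] * bob[y] for x in (0, 1) for y in (0, 1)}
        best = max(best, abs(chsh(e)))
    return best

def quantum_s(alice_angles=(0.0, math.pi / 2), bob_angles=(math.pi / 4, 3 * math.pi / 4)):
    """Quantum |S| for a spin singlet, where E = -cos(difference of analyzer angles)."""
    e = {(x, y): -math.cos(alice_angles[x] - bob_angles[y]) for x in (0, 1) for y in (0, 1)}
    return abs(chsh(e))

print(local_bound())          # 2
print(round(quantum_s(), 4))  # 2.8284
```

Any local hidden-variable model whose hidden variable is independent of the settings is a mixture of these 16 deterministic strategies, so its |S| cannot exceed 2 either; the quantum value 2√2 is what experiments test against.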

But experimental tests of Bell's theorem have several major loopholes. Although the outcomes of experiments may appear to support the predictions of quantum mechanics, they may instead reflect unknown “hidden variables” that give the illusion of a quantum outcome but can still be explained in classical terms.

Two of these major loopholes have since been closed, but a third remains; physicists refer to it as “setting independence,” or, more provocatively, “free will.”

This loophole proposes that a particle detector’s settings may “conspire” with events in the shared causal past of the detectors themselves to determine which properties of the particle to measure — a scenario that, however far-fetched, implies that a physicist running the experiment does not have complete free will in choosing each detector’s setting.

Such a scenario would bias the measurements, making two particles appear more strongly correlated than they actually are and giving more weight to quantum mechanics than to classical physics.
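To make the "conspiracy" concrete, here is a toy sketch using the CHSH form of Bell's inequality, S = E(0,0) - E(0,1) + E(1,0) + E(1,1), where E(x, y) is the average product of the two ±1 outcomes when Alice uses setting x and Bob uses setting y. Local models whose hidden variable is independent of the settings satisfy |S| ≤ 2. If the hidden variable is allowed to depend on the settings, a purely local, deterministic model can reach S = 4. This is an illustrative model, not one taken from the paper, and all names in it are hypothetical.

```python
import itertools

# Every deterministic local strategy: lam = (A0, A1, B0, B1), each outcome +1 or -1.
STRATEGIES = list(itertools.product((1, -1), repeat=4))
SIGNS = {(0, 0): 1, (0, 1): -1, (1, 0): 1, (1, 1): 1}  # CHSH weights per setting pair

def outcomes(lam, x, y):
    """Alice's outcome depends only on her setting x, Bob's only on his setting y."""
    a0, a1, b0, b1 = lam
    return (a0, a1)[x], (b0, b1)[y]

def s_value(choose_lam):
    """S for deterministic strategies; choose_lam(x, y) picks the hidden variable per setting pair."""
    total = 0
    for (x, y), sign in SIGNS.items():
        a, b = outcomes(choose_lam(x, y), x, y)
        total += sign * a * b
    return total

# Setting-independent: the hidden variable is the same whatever settings are chosen.
independent_best = max(abs(s_value(lambda x, y, lam=lam: lam)) for lam in STRATEGIES)

def conspiring(x, y):
    """Setting-dependent: the source 'knows' (x, y) and picks outcomes matching the CHSH sign."""
    return next(lam for lam in STRATEGIES
                if outcomes(lam, x, y)[0] * outcomes(lam, x, y)[1] == SIGNS[(x, y)])

print(independent_best)     # 2
print(s_value(conspiring))  # 4
```

Nothing in the conspiring model is nonlocal; it only needs advance knowledge of the settings, which is exactly the correlation the cosmic-setting experiment is designed to rule out.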

In a recent paper, researchers propose an experiment that may close the last major loophole in tests of Bell's inequality. If experiments still violate the 50-year-old inequality once that loophole is closed, it would mean that our universe is based not on the textbook laws of classical physics, but on the less tangible probabilities of quantum mechanics.

Such a quantum view would allow for seemingly counterintuitive phenomena such as entanglement, in which the measurement of one particle instantly affects another, even if the entangled particles are at opposite ends of the universe. Entanglement, a quantum feature Albert Einstein skeptically referred to as “spooky action at a distance,” seems to suggest that entangled particles can affect each other faster than the speed of light.

Yes, you read that right: faster than the speed of light, and not just as a mathematical sleight of hand. The correlations cannot, however, be used to send a signal faster than light.

“It sounds creepy, but people realized that’s a logical possibility that hasn’t been closed yet,” says MIT’s David Kaiser, the Germeshausen Professor of the History of Science and senior lecturer in the Department of Physics. “Before we make the leap to say the equations of quantum theory tell us the world is inescapably crazy and bizarre, have we closed every conceivable logical loophole, even if they may not seem plausible in the world we know today?”

Now Kaiser, along with MIT postdoc Andrew Friedman and Jason Gallicchio of the University of Chicago, has proposed an experiment to close this third loophole by determining a particle detector's settings using some of the oldest light in the universe: light from distant quasars, the extremely bright active nuclei of galaxies that formed billions of years ago.

Artist's rendering of ULAS J1120+0641, a very distant quasar. Image: ESO/M. Kornmesser

The idea, essentially, is that if two quasars on opposite sides of the sky are sufficiently distant from each other, they would have been out of causal contact since the Big Bang some 14 billion years ago, with no possible means of any third party communicating with both of them since the beginning of the universe — an ideal scenario for determining each particle detector’s settings.
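Whether two quasars are "out of causal contact" can be checked with a light-cone calculation. The sketch below assumes a flat ΛCDM universe with illustrative parameters (H0 = 70 km/s/Mpc, Ω_m = 0.3, Ω_r = 9×10⁻⁵) and traces each emission event's past light cone back to the Big Bang (scale factor a = 0) rather than to the end of inflation; the paper's exact criterion and cosmological parameters may differ, and the function names are hypothetical. Two past light cones overlap exactly when the comoving separation of the emission points is no larger than the sum of the distances light could have covered before each emission.

```python
import math

C_KM_S = 299_792.458  # speed of light, km/s

def conformal_distance(a_lo, a_hi, h0=70.0, omega_m=0.3, omega_r=9e-5, n=4000):
    """Comoving distance (Mpc) light travels between scale factors a_lo and a_hi, flat universe.

    chi = (c / H0) * integral of da / sqrt(omega_r + omega_m*a + omega_l*a^4).
    Substituting a = u^2 smooths the integrand near a = 0; Simpson's rule does the rest.
    """
    omega_l = 1.0 - omega_m - omega_r  # flatness assumed
    u_lo, u_hi = math.sqrt(a_lo), math.sqrt(a_hi)

    def integrand(u):
        a = u * u
        return 2.0 * u / math.sqrt(omega_r + omega_m * a + omega_l * a ** 4)

    h = (u_hi - u_lo) / n  # n must be even for Simpson's rule
    total = integrand(u_lo) + integrand(u_hi)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * integrand(u_lo + i * h)
    return (C_KM_S / h0) * total * h / 3.0

def share_causal_past(z_a, z_b, separation_deg, **cosmo):
    """True if the past light cones of two quasar emission events overlap after a = 0."""
    a_a, a_b = 1.0 / (1.0 + z_a), 1.0 / (1.0 + z_b)
    chi_a = conformal_distance(a_a, 1.0, **cosmo)  # comoving distance from us to quasar A
    chi_b = conformal_distance(a_b, 1.0, **cosmo)
    eta_a = conformal_distance(0.0, a_a, **cosmo)  # how far light could travel before A emitted
    eta_b = conformal_distance(0.0, a_b, **cosmo)
    alpha = math.radians(separation_deg)
    # Comoving separation of the two emission points (law of cosines).
    d = math.sqrt(max(chi_a ** 2 + chi_b ** 2 - 2 * chi_a * chi_b * math.cos(alpha), 0.0))
    return d <= eta_a + eta_b

print(share_causal_past(0.1, 0.1, 180))  # True: nearby quasars share a causal past
print(share_causal_past(10, 10, 180))    # False: no shared past since a = 0
```

For two quasars in exactly opposite directions at equal redshift, the condition reduces to each quasar's comoving distance exceeding half of the present-day conformal horizon, which is why the sources must be so distant.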

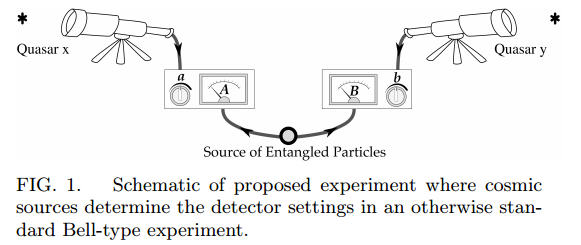

As Kaiser explains it, an experiment would go something like this: A laboratory setup would consist of a particle generator, such as a radioactive atom that spits out pairs of entangled particles. One detector measures a property of particle A, while another detector does the same for particle B. A split second after the particles are generated, but just before the detectors are set, scientists would use telescopic observations of distant quasars to determine which property each detector will measure on its respective particle. In other words, quasar A determines the settings used to detect particle A, and quasar B sets the detector for particle B.
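The procedure can be mimicked in a toy Monte Carlo simulation. It assumes ideal detectors, a spin-singlet pair whose outcomes are sampled from the quantum prediction, and, as a hypothetical rule, that each quasar photon's measured wavelength is compared with a fixed threshold so that "redder" or "bluer" picks one of two analyzer angles. The random wavelengths stand in for real telescope data; the threshold, wavelength range, angles, and function names are illustrative, not values from the paper, and the simulation ignores the timing constraints a real experiment must meet.

```python
import math
import random

THRESHOLD_NM = 550.0                         # hypothetical red/blue dividing line
ALICE_ANGLES = (0.0, math.pi / 2)            # analyzer angle for setting bit 0 / 1
BOB_ANGLES = (math.pi / 4, 3 * math.pi / 4)

def setting_from_photon(wavelength_nm):
    """Turn one quasar photon into a setting bit: 1 if redder than the threshold, else 0."""
    return 1 if wavelength_nm > THRESHOLD_NM else 0

def measure_singlet(theta_a, theta_b, rng):
    """Sample +1/-1 outcomes for a spin singlet: P(equal outcomes) = sin^2((theta_a - theta_b) / 2)."""
    a = rng.choice((1, -1))
    same = rng.random() < math.sin((theta_a - theta_b) / 2) ** 2
    return a, (a if same else -a)

def run(trials, seed=1):
    """Estimate |S| from simulated trials whose settings are chosen by 'quasar photons'."""
    rng = random.Random(seed)
    sums = {(x, y): 0 for x in (0, 1) for y in (0, 1)}
    counts = dict.fromkeys(sums, 0)
    for _ in range(trials):
        # Stand-ins for photons from quasar A and quasar B (uniform over 400-700 nm).
        x = setting_from_photon(rng.uniform(400.0, 700.0))
        y = setting_from_photon(rng.uniform(400.0, 700.0))
        a, b = measure_singlet(ALICE_ANGLES[x], BOB_ANGLES[y], rng)
        sums[(x, y)] += a * b
        counts[(x, y)] += 1
    if min(counts.values()) == 0:
        raise ValueError("too few trials to populate every setting pair")
    e = {k: sums[k] / counts[k] for k in sums}
    return abs(e[(0, 0)] - e[(0, 1)] + e[(1, 0)] + e[(1, 1)])

print(f"|S| = {run(200_000):.3f}")
```

With enough trials, |S| should approach the quantum value 2√2 ≈ 2.83 for these angles, above the local bound of 2; in a real run, the point of the quasar photons is that nothing in the detectors' shared past could have chosen x and y.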

The researchers reason that since each detector’s setting is determined by sources that have had no communication or shared history since the beginning of the universe, it would be virtually impossible for these detectors to “conspire” with anything in their shared past to give a biased measurement; the experimental setup could therefore close the “free will” loophole. If, after multiple measurements with this experimental setup, scientists found that the measurements of the particles were correlated more than predicted by the laws of classical physics, Kaiser says, then the universe as we see it must be based instead on quantum mechanics.

Credit: arXiv:1310.3288

“I think it’s fair to say this [loophole] is the final frontier, logically speaking, that stands between this enormously impressive accumulated experimental evidence and the interpretation of that evidence saying the world is governed by quantum mechanics,” Kaiser says.

Now that the researchers have put forth an experimental approach, they hope that others will perform actual experiments, using observations of distant quasars.

“At first, we didn’t know if our setup would require constellations of futuristic space satellites, or 1,000-meter telescopes on the dark side of the moon,” Friedman says. “So we were naturally delighted when we discovered, much to our surprise, that our experiment was both feasible in the real world with present technology, and interesting enough to our experimentalist collaborators who actually want to make it happen in the next few years.”

Adds Kaiser, “We’ve said, ‘Let’s go for broke — let’s use the history of the cosmos since the Big Bang, darn it.’ And it is very exciting that it’s actually feasible.”

Preprint: Jason Gallicchio, Andrew S. Friedman, David I. Kaiser, 'Testing Bell's Inequality with Cosmic Photons: Closing the Settings-Independence Loophole', arXiv:1310.3288. Source: Jennifer Chu at MIT
