Sensory Substitution Devices (SSDs) use auditory or tactile stimulation to provide representations of visual information and can help the blind "see" colors and shapes.

Users can recognize an image without seeing it because its information is transformed into audio or touch signals. But few people in the blind community actually use these devices because they are cumbersome and unpleasant.

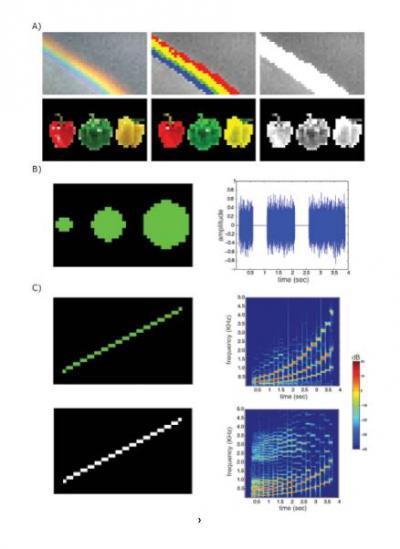

That may change with EyeMusic, which transmits shape and color information through a composition of musical tones, or "soundscapes." EyeMusic was developed by senior investigator Prof. Amir Amedi, PhD, and colleagues at the Hebrew University. It scans an image and uses musical pitch to represent the vertical location of pixels: the higher a pixel sits in the image, the higher the pitch of the musical note associated with it. Timing indicates horizontal pixel location. Notes played closer to the opening cue represent the left side of the image, while notes played later in the sequence represent the right side.
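The position mapping described above can be sketched in a few lines of Python. This is an illustration only, not the EyeMusic implementation: the function name `pixel_to_note` and the `sweep_seconds` duration are assumptions, and the 40-by-24 grid is taken from the figure caption in this article.

```python
def pixel_to_note(row, col, n_rows=24, n_cols=40, sweep_seconds=2.0):
    """Map a pixel to (onset time in seconds, pitch step).

    row 0 is the top of the image and col 0 is the left edge.
    sweep_seconds is a hypothetical scan duration, not a published value.
    """
    if not (0 <= row < n_rows and 0 <= col < n_cols):
        raise ValueError("pixel lies outside the image")
    # Horizontal position becomes time: left columns sound first.
    onset = col / n_cols * sweep_seconds
    # Vertical position becomes pitch: the top row gets the highest step.
    pitch_step = (n_rows - 1) - row
    return onset, pitch_step


if __name__ == "__main__":
    print(pixel_to_note(0, 0))    # top-left pixel: earliest onset, highest pitch step
    print(pixel_to_note(23, 20))  # bottom row, middle column
```

The pitch step is just an index into whatever scale the device plays; it is not a frequency on its own.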

Additionally, color information, which most SSDs cannot utilize, is conveyed by the use of different musical instruments to create the sounds: white (vocals), blue (trumpet), red (reggae organ), green (synthesized reed), yellow (violin); black is represented by silence.
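As a rough sketch, the color-to-instrument pairing listed above amounts to a lookup table in which black produces no note. The names `INSTRUMENT_FOR_COLOR` and `column_events` are hypothetical and chosen for illustration; only the pairings themselves come from the article.

```python
# Color-to-instrument pairs as listed in the article; None stands for silence.
INSTRUMENT_FOR_COLOR = {
    "white": "vocals",
    "blue": "trumpet",
    "red": "reggae organ",
    "green": "synthesized reed",
    "yellow": "violin",
    "black": None,
}


def column_events(column_colors):
    """Return (row, instrument) for every audible pixel in one image column.

    column_colors lists color names from top (row 0) to bottom.
    Black pixels are skipped because they are rendered as silence.
    """
    events = []
    for row, color in enumerate(column_colors):
        instrument = INSTRUMENT_FOR_COLOR[color]
        if instrument is not None:
            events.append((row, instrument))
    return events


if __name__ == "__main__":
    print(column_events(["black", "red", "black", "blue"]))
```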

Using the EyeMusic SSD, both blind and blindfolded sighted participants were able to correctly identify a variety of basic shapes and colors after as little as 2-3 hours of training.

Top: The EyeMusic's constructed audio file preserves both spatial information and image colors. The Sensory Substitution Device resizes the image to X=40 pixels (columns) by Y=24 pixels (rows) and runs a color clustering algorithm to get a 6-color image. Middle: The 2D image's X-axis is mapped to the time domain, i.e., pixels situated on the left side of the image will sound before the ones situated on its right side, illustrated here in waveform. Bottom: The Y-axis is mapped to the frequency domain, i.e., pixels situated on the upper side of the image will sound higher in frequency while those on its lower side will sound lower in frequency, illustrated here in spectrogram representation. Credit: Restorative Neurology and Neuroscience (Abboud et al.)
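The preprocessing step in the caption, shrinking the image to 40 by 24 pixels and reducing it to six colors, might look roughly like the sketch below. The article does not say which clustering algorithm EyeMusic runs, so this example swaps in a plain nearest-palette assignment with nearest-neighbor downsampling. The RGB values in `PALETTE` and the function names are assumptions made for illustration.

```python
# Assumed reference RGB values for the six output colors (not from the study).
PALETTE = {
    "white": (255, 255, 255),
    "blue": (0, 0, 255),
    "red": (255, 0, 0),
    "green": (0, 255, 0),
    "yellow": (255, 255, 0),
    "black": (0, 0, 0),
}


def nearest_color(rgb):
    """Return the palette color name closest to rgb in squared RGB distance."""
    def dist2(name):
        return sum((a - b) ** 2 for a, b in zip(rgb, PALETTE[name]))
    return min(PALETTE, key=dist2)


def to_six_color_grid(image, n_cols=40, n_rows=24):
    """Downsample an image (list of rows of RGB tuples) to n_rows x n_cols color names.

    Uses nearest-neighbor sampling, a stand-in for whatever resizing
    and clustering the real device performs.
    """
    src_rows, src_cols = len(image), len(image[0])
    grid = []
    for r in range(n_rows):
        src_row = image[r * src_rows // n_rows]
        grid.append([nearest_color(src_row[c * src_cols // n_cols])
                     for c in range(n_cols)])
    return grid


if __name__ == "__main__":
    # Synthetic 80x48 test image: reddish left half, dark right half.
    image = [[(200, 30, 20)] * 40 + [(10, 10, 10)] * 40 for _ in range(48)]
    grid = to_six_color_grid(image)
    print(len(grid), len(grid[0]), grid[0][0], grid[0][39])
```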

"This study is a demonstration of abilities showing that it is possible to encode the basic building blocks of shape using the EyeMusic," explains Amedi. "Furthermore, the success in associating color to musical timbre holds promise for facilitating the representation of more complex shapes."

In addition to successfully identifying shapes and colors, users in the new EyeMusic study indicated they found the SSD's soundscapes to be relatively pleasant and potentially tolerable for prolonged use. "In soundscapes generated from images," notes Amedi, "there is a tendency for adjacent frequencies to be played together. Using a semitone western scale would then generate sounds that are perceived as highly dissonant. Therefore, to generate more pleasant soundscapes, we used the pentatonic musical scale that generates less dissonance when adjacent notes are played together."
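To make the scale choice concrete, the sketch below builds one pitch per image row from a pentatonic scale, so that neighboring rows are always at least two semitones apart rather than one. The article does not specify which pentatonic scale or starting note EyeMusic uses; the major pentatonic offsets and the 220 Hz base frequency here are assumptions, as is the function name.

```python
# Semitone offsets of the major pentatonic scale within one octave (assumed variant).
PENTATONIC_OFFSETS = [0, 2, 4, 7, 9]


def pentatonic_frequencies(n_notes=24, base_hz=220.0):
    """Return n_notes ascending frequencies (Hz) on an equal-tempered pentatonic scale.

    base_hz is a hypothetical starting pitch, not a value from the study.
    """
    freqs = []
    for i in range(n_notes):
        octave, degree = divmod(i, len(PENTATONIC_OFFSETS))
        semitones = 12 * octave + PENTATONIC_OFFSETS[degree]
        freqs.append(base_hz * 2 ** (semitones / 12))
    return freqs


if __name__ == "__main__":
    for hz in pentatonic_frequencies(6):
        print(round(hz, 2))
```

With 24 rows, the scale spans just under five octaves, which is one reason a real device would need to choose its lowest note with care.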

While this new study shows that the EyeMusic can enable the visually impaired to extract visual shape and color information using auditory soundscapes of objects, researchers feel that this device also holds great promise for the field of visual rehabilitation in general. By providing additional color information, the EyeMusic can help facilitate object recognition and scene segmentation, while the pleasant soundscapes offer the potential of prolonged use.

"There is evidence suggesting that the brain is organized as a task-machine and not as a sensory machine. This strengthens the view that SSDs can be useful for visual rehabilitation, and therefore we suggest that the time may be ripe for turning part of the SSD spotlight back on practical visual rehabilitation," Prof. Amedi adds. "In the future, it would be intriguing to test whether the use of naturalistic sounds, like music and human voice, can facilitate learning and brain processing relying on the developed neural networks for music and human voice processing."

Additionally, the researchers hope the EyeMusic can become a tool for future neuroscience research. "It would be intriguing to explore the plastic changes associated with learning to decode color information for auditory timbre in the congenitally blind, who never experience color in their life. The utilization of the EyeMusic and its added color information in the field of neuroscience could facilitate exploring several questions in the blind with the potential to expand our understanding of brain organization in general," concludes Prof. Amedi.
