First of all, machine learning. This is a booming field of research that develops computer programs capable of learning from examples, so that they can then apply what they have learned to a variety of tasks of great relevance to today's society, as well as to the advancement of human knowledge.

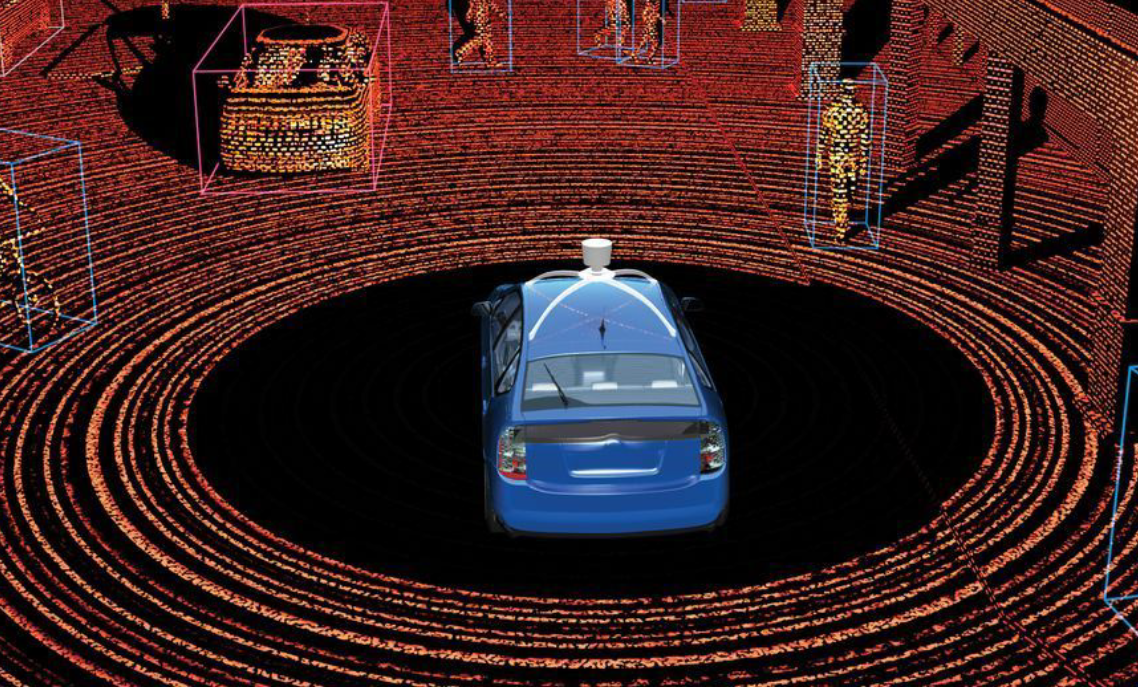

To give a simple example, an important goal being pursued nowadays is teaching vehicles to drive themselves. Vehicles can be equipped with sensors (video cameras, radar, etcetera) that provide a continuous stream of data about the surroundings, the conditions of the vehicle itself, and the environment in general. How can that multi-dimensional information be compressed into effective summaries that allow the vehicle's software to make decisions about the wheel and pedals? Machine learning methods use large amounts of training data to teach themselves how to, e.g., distinguish dangerous situations from false alarms.

(Above: a visualization of how the software in a self-driving vehicle "sees" the environment; taken from Jesse Thaler's talk at today's session)

If a vehicle is driving itself and a person starts crossing the road ahead, the sensors register the person as a pattern of image pixels moving across the field of view. A classification algorithm should then determine whether those inputs are more likely to come from a real person or rather from, say, a bird crossing the camera's field of view.
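As a toy illustration of the classification step, here is a minimal Python sketch. The two features (apparent height and speed across the field of view), the training numbers, and the nearest-centroid rule are all hypothetical choices made for illustration; a real vehicle would rely on far richer inputs and a much more powerful classifier, such as a deep neural network.

```python
import math

# Hypothetical labelled examples: (apparent_height_m, speed_m_per_s) -> label.
# The features and numbers are invented for illustration only.
TRAINING = [
    ((1.7, 1.4), "person"),
    ((1.6, 1.2), "person"),
    ((1.8, 1.6), "person"),
    ((0.3, 8.0), "bird"),
    ((0.2, 10.0), "bird"),
    ((0.4, 7.5), "bird"),
]

def centroids(examples):
    """Return the average feature vector of each class."""
    sums, counts = {}, {}
    for features, label in examples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, x in enumerate(features):
            acc[i] += x
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}

def classify(features, cents):
    """Assign the label of the nearest class centroid (Euclidean distance)."""
    return min(cents, key=lambda label: math.dist(features, cents[label]))

cents = centroids(TRAINING)
print(classify((1.75, 1.3), cents))  # person
print(classify((0.25, 9.0), cents))  # bird
```

The "learning" here is only the averaging of labelled examples, but the principle is the same as in more sophisticated methods: the decision rule is extracted from data rather than written by hand.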

Another task we need our software algorithms to perform, in order to let them drive a vehicle properly, is called regression. The vehicle may need to determine the exact amount of rotation to impart to the steering wheel, as a function of time, so that it stays on the desired curved path along a winding road; this requires a number of inputs, such as the speed of the vehicle, the conditions of the road, the weight of the passengers, etcetera. In this case you are trying to find the best estimate of a parameter as a complex function of the inputs, but you do not know the function, only previous examples of trajectories!
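The idea of estimating a quantity from past examples alone can be sketched with the simplest regression there is: an ordinary least-squares straight-line fit. The single input (road curvature), the output (steering angle), the numbers, and the assumption that the relation is linear are all hypothetical and purely illustrative; a real system would learn a far more complex, multi-input function, for instance with a neural network.

```python
# Hypothetical past examples: road curvature (1/m) -> steering angle (degrees).
# The true relation is unknown to the fitter; these numbers are invented.
curvature = [0.00, 0.01, 0.02, 0.03, 0.04]
steering = [0.5, 10.4, 20.6, 30.3, 40.7]

def fit_line(xs, ys):
    """Ordinary least squares for y = a + b*x; returns (a, b)."""
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b = sxy / sxx
    a = my - b * mx
    return a, b

a, b = fit_line(curvature, steering)
# Estimate the steering angle for a curvature not present in the examples.
prediction = a + b * 0.025
print(f"a = {a:.3f}, b = {b:.1f}, prediction = {prediction:.3f}")
```

The fitted intercept and slope play the role of the "learned structure": once extracted from the examples, they can be applied to new inputs.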

Classification and regression are but the simplest applications of machine learning algorithms. Nowadays we are asking machines to perform more and more complex operations. Understanding written text and extracting information from it is one example, where deep neural networks can be successfully deployed; teaching a robot to walk is another, where reinforcement learning techniques are usually the right tool. The recognition of faces in pictures or video imagery is a task to which convolutional neural networks have been applied effectively.

As we can see, there are attractive applications of machine learning algorithms wherever we look, so of course the study of particle physics is another one. Our experiments at the LHC produce huge flows of complex, multi-dimensional data, and we struggle to make sense of them. Reconstructing what happened in a subnuclear collision from the millions of electronic signals we record in our detectors is a task to which machine learning techniques have been applied with increasing success over the past ten years (but I will say here that I started doing this in 1992).

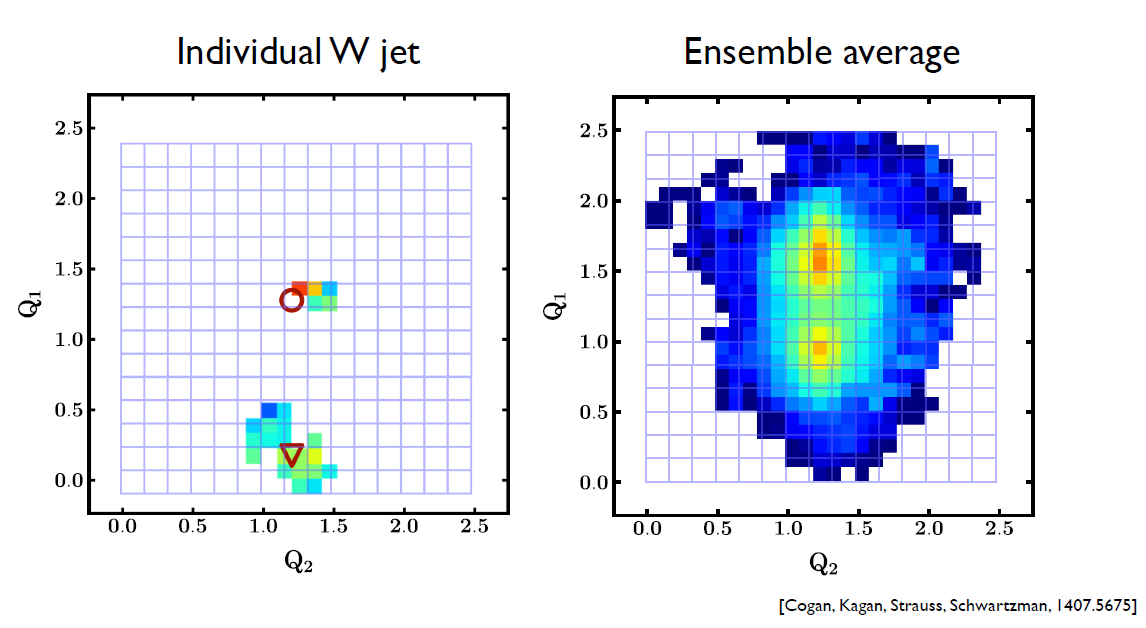

Nowadays, one important topic is the reconstruction of jets of particles produced in the proton-proton collisions that the LHC delivers to the ATLAS and CMS detectors. What are jets? A jet is the product of the fragmentation process that takes place when a quark or a gluon is kicked out of a proton with large energy, or when a heavy particle such as the Higgs boson decays into a quark-antiquark pair.

What we see in the detector is a stream of particles, and we may be content with measuring their collective properties: the total jet energy, for instance, is an observable quantity that carries information on the energy of the originating quark or gluon. But if we want to know more, such as what produced the jet (was it a light quark, a bottom quark, or rather a gluon?), then we have to use as much information as possible, looking at all the details of each and every produced particle, its measured characteristics and its identity. It is a wondrous task, and deep neural networks are proving their worth in it.

(Above: how the energy deposits of a jet in a calorimeter can be interpreted as forming an "image", whose details can be exploited, in this case to identify the decay of a heavy W boson from the "sub-jet" components it produces. Again, this picture is from Jesse Thaler's talk.)
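To make the "jet image" idea concrete, here is a minimal Python sketch that pixelates a jet: each constituent particle's energy is added to the pixel of a fixed grid in the pseudorapidity-azimuth plane (eta, phi) where it lands. The constituent list, the 16x16 grid, and the ±0.8 window are hypothetical choices for illustration; actual jet-image studies apply additional preprocessing before feeding such pixels to a network.

```python
# Hypothetical jet constituents: (eta, phi, energy_GeV), invented for illustration.
constituents = [
    (0.05, 0.02, 120.0),
    (0.13, 0.07, 35.0),
    (-0.33, 0.24, 80.0),
    (-0.27, 0.31, 15.0),
]

def jet_image(particles, eta_range=(-0.8, 0.8), phi_range=(-0.8, 0.8), n_bins=16):
    """Sum constituent energies into an n_bins x n_bins grid of (eta, phi) pixels."""
    image = [[0.0] * n_bins for _ in range(n_bins)]
    eta_width = (eta_range[1] - eta_range[0]) / n_bins
    phi_width = (phi_range[1] - phi_range[0]) / n_bins
    for eta, phi, energy in particles:
        i = int((eta - eta_range[0]) // eta_width)
        j = int((phi - phi_range[0]) // phi_width)
        if 0 <= i < n_bins and 0 <= j < n_bins:  # ignore particles outside the window
            image[i][j] += energy
    return image

img = jet_image(constituents)
total = sum(sum(row) for row in img)  # summed pixel energy, here 250 GeV
print(total)
```

Summing all pixels recovers a collective quantity like the total jet energy, while the pattern of individual pixels (for example, two separated clusters) is the kind of detail a convolutional network can exploit to tell a W boson decay from an ordinary quark or gluon jet.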

So that, in a nutshell, is what the workshop is going to be about: ways to use the most advanced machine learning tools to extract information from our detector outputs. I hope to be able to describe some of the various developments in more detail in forthcoming posts here, tomorrow or the day after. Stay tuned if you are interested!

---

Tommaso Dorigo is an experimental particle physicist who works for the INFN at the University of Padova, and collaborates with the CMS experiment at the CERN LHC. He coordinates the European network AMVA4NewPhysics as well as research in accelerator-based physics for INFN-Padova, and is an editor of the journal Reviews in Physics. In 2016 Dorigo published the book “Anomaly! Collider physics and the quest for new phenomena at Fermilab”. You can get a copy of the book on Amazon.
