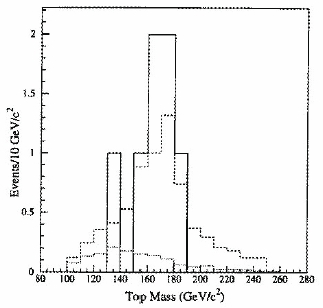

If it feels strange to you to hear that a physical property of a particle was measured directly before the particle was discovered, think harder: the meaning of "discovery" is a matter of convention. In particle physics, a claim of discovery of a new particle can usually be issued only after a signal is found that reaches or exceeds a total significance of five standard deviations. In 1994 CDF found a signal incompatible with backgrounds by about three standard deviations, and proceeded to measure the mass of the quark under the hypothesis that those events were indeed top quark decays. So CDF can boast of a nice mass measurement that predates the top discovery, which came one year later and was shared by the CDF and DZERO collaborations.
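
To put the two thresholds in perspective, here is a small Python sketch that converts a significance expressed in standard deviations into the one-sided Gaussian tail probability, the convention commonly used when quoting a signal excess. The function name is just for illustration.

```python
import math

def one_sided_p_value(n_sigma: float) -> float:
    """Probability that a background-only Gaussian fluctuation exceeds
    n_sigma standard deviations in the signal direction."""
    return 0.5 * math.erfc(n_sigma / math.sqrt(2.0))

for n in (3.0, 5.0):
    print(f"{n:.0f} sigma -> p = {one_sided_p_value(n):.3g}")
```

The three-sigma level corresponds to roughly one chance in 740 that background alone fluctuates that far, the five-sigma level to about one chance in 3.5 million.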

Anyway, all the above is history now. What matters to me when I hear about new results on the top quark is that this intriguingly heavy "elementary particle" is by now an extremely well-known object, and this in turn opens avenues for research into its properties, and for further discoveries.

But Why Bother?

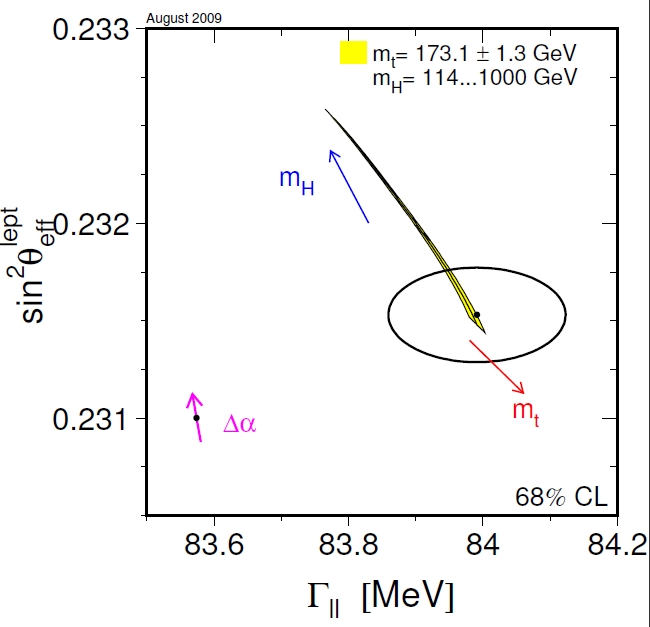

You might argue that the top mass is just a number, and one already known quite well by now, compared with other parameters of the standard model. This is indeed true: for instance, if one were to point a finger at the quantities that still drive our uncertainty on the mass of the Higgs boson (which can be computed from the standard model parameters, although only with low precision at present, because it depends very loosely on them), one would have to settle on the W boson mass and the Weinberg angle, rather than on the top quark mass. This much can be gathered by looking carefully at the graph below.

In the figure, you see by how much the Higgs mass varies when the top quark mass is changed within the limits allowed by last August's world average. Let me explain. The black ellipse is a measurement of two important standard model parameters: the square of the sine of the weak mixing angle theta on the y-axis, and the leptonic width of the Z boson on the x-axis. Let us forget about those for a moment.

The yellow band shows how sin²(theta) and Gamma(ll) vary according to the standard model if we change the Higgs mass from 114 to 1000 GeV. That is to say, if the Higgs boson weighed one TeV, the experimental measurement of x and y (the point at the center of the black ellipse) would be far off the true value of the two parameters, which would instead sit at the uppermost corner of the yellow band.

For a 114 GeV Higgs things agree much better with the black ellipse, since the true value of the parameters now sits at the bottom of the band. But what does the top quark mass have to do with all this? Well, if you changed the top mass from 173.1 GeV (the world average value) to a different value, the above considerations would be slightly modified: the true value of sin²(theta) and Gamma(ll) would move left or right along the yellow band, in the direction highlighted by the red arrow pointing toward the lower right. The yellow band spans one-standard-deviation variations in the top mass, 1.3 GeV. There is not much freedom left there!

In other words, the top quark mass does not have a large impact on the determination of the two parameters plotted in the figure, or on the Higgs boson mass. Of course, it used to have a larger impact when its experimental uncertainty was five times larger; but now we need to determine other quantities with more precision if we want to test the standard model more stringently, and maybe obtain a better estimate of the possible value of the Higgs boson mass.

All that said, the top mass remains a critical parameter for model builders: several details of supersymmetric theories depend on its precise value, and so, eventually, do the rates of events that might one day constitute a discovery at the Tevatron or at the LHC. But even without that justification, measuring the top quark mass with high precision is of great importance for LHC physics.

Why the LHC? Because sub-GeV precision on the top quark mass allows the CMS and ATLAS experiments to calibrate their jet energy measurements with the large datasets of top quark pair decays they will start collecting... next week!!!!

Beware: eventually, the mass of the top quark will be measured better at the LHC, thanks to the fact that the rate of top production there is going to be two orders of magnitude larger than at the Tevatron. Yet for a long while, the experiments will have a hard time calibrating the scale of their calorimetric jet energy measurements. Under such circumstances, top quarks are a perfect "calibration line": the Tevatron says what the top mass is, and the LHC measures it and finds a different value; in other words, it measures an offset. The experiments can then correct for that offset in any other measurement. The top mass measured at the LHC temporarily becomes useless by itself, but in exchange the LHC experiments acquire much better precision in other searches and measurements.
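
As a rough illustration of the "calibration line" idea, here is a minimal Python sketch. It assumes, purely for simplicity, that the jet energy mis-calibration is a single multiplicative factor and that the reconstructed mass of a hadronically decaying top (built from three jets) scales linearly with it; real calibrations depend on jet energy and direction and are far more involved. The function names and the LHC value in the usage lines are hypothetical.

```python
TEVATRON_TOP_MASS = 173.1  # GeV, world average quoted above, used as the reference

def jes_correction(measured_top_mass: float,
                   reference_mass: float = TEVATRON_TOP_MASS) -> float:
    """Multiplicative jet-energy-scale correction (toy model).

    Assumes the reconstructed hadronic top mass is proportional to the
    jet energy scale, so the offset is removed by a single factor k.
    """
    return reference_mass / measured_top_mass

def correct_jet_energies(jet_energies: list[float], k: float) -> list[float]:
    """Apply the correction factor k to every jet energy (GeV)."""
    return [k * e for e in jet_energies]

# Hypothetical early-LHC top mass measurement, for illustration only.
k = jes_correction(176.0)
corrected = correct_jet_energies([50.0, 80.0, 120.0], k)
print(f"k = {k:.4f}", [round(e, 2) for e in corrected])
```

The same factor would then be applied to jets in any other analysis, which is the sense in which the top mass "becomes useless by itself" but valuable as a calibration.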

The new measurement

After this long introduction, I will be rather quick in describing the stellar new result by CDF. This is a measurement that fits the top quark mass and the jet energy scale together. The two quantities are of course connected (the top quark decays to jets, so to size up the former we need to measure the latter), but since top quarks decay into hadronic jets through the intercession of W bosons, reconstructing the W boson mass (known to better than per mille accuracy by now) from the two jets it produces allows an in-situ calibration of the jet energy scale. A global fit extracts both the top mass and the jet energy scale, to the benefit of both estimates!
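
Why the W mass constrains the jet energy scale can be seen in a toy: for massless jets the dijet invariant mass is exactly proportional to a common multiplicative scale factor k applied to both jets, so requiring the two jets from the W to reconstruct to the known W mass fixes k. This Python sketch rests on that simplifying assumption; in the CDF analysis the jet energy scale is a parameter of the global fit (expressed in standard deviations from its nominal value), not an event-by-event rescaling, and the helper names and jet kinematics below are hypothetical.

```python
import math

W_MASS = 80.4  # GeV, approximate world-average W boson mass

def massless_jet(e: float, eta: float, phi: float) -> tuple[float, float, float, float]:
    """Four-vector (E, px, py, pz) of a massless jet from energy, pseudorapidity, azimuth."""
    pt = e / math.cosh(eta)
    return (e, pt * math.cos(phi), pt * math.sin(phi), pt * math.sinh(eta))

def invariant_mass(p1, p2) -> float:
    """Invariant mass of the sum of two four-vectors."""
    e, px, py, pz = (a + b for a, b in zip(p1, p2))
    return math.sqrt(max(e * e - px * px - py * py - pz * pz, 0.0))

def jes_from_w(jets) -> float:
    """Scale factor k that brings m_jj to the W mass; m_jj is linear in k for massless jets."""
    return W_MASS / invariant_mass(*jets)

# Two hypothetical jets from a W decay candidate.
j1 = massless_jet(45.0, 0.3, 0.0)
j2 = massless_jet(50.0, -0.2, 2.5)
k = jes_from_w((j1, j2))
scaled = [tuple(k * c for c in j) for j in (j1, j2)]
print(f"m_jj = {invariant_mass(j1, j2):.1f} GeV, k = {k:.3f}, "
      f"corrected m_jj = {invariant_mass(*scaled):.1f} GeV")
```

In the real fit, the same scale parameter also shifts the jets from the b-quarks, which is how the W constraint feeds into the top mass.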

[ A side note: if you are interested in understanding how one calibrates the jet energy measurement and what exactly it is that we call the "jet energy scale", please have a look at a couple of pieces I wrote on that very topic a while ago here. ]

The analysis starts from a very streamlined selection of top-pair decay candidates: in a dataset corresponding to 4.8 inverse femtobarns of proton-antiproton collisions, collected by CDF from 2002 to 2009, events are selected to contain an energetic electron or muon (with transverse momentum above 20 GeV), four jets (again, with 20 GeV or more of transverse energy), and some evidence of neutrino escape (missing transverse energy above 20 GeV). These events constitute the typical "single lepton" signature of top-pair decay: one of the W bosons emitted in the top decays produces a pair of jets, the other produces a lepton and a neutrino, and the two accompanying b-quarks yield two additional jets. Events are then required to contain at least one jet identified as originating from a b-quark, by reconstructing a secondary vertex from the tracks contained in the jet.
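
The selection just described can be written as a simple filter. This is a Python sketch with a hypothetical event record (the Event and Jet classes are illustrative, not CDF software); the thresholds are those quoted above, and it assumes exactly four jets above threshold, since the text does not say how extra jets are treated.

```python
from dataclasses import dataclass, field

@dataclass
class Jet:
    et: float        # transverse energy, GeV
    b_tagged: bool   # True if a secondary vertex was reconstructed in the jet

@dataclass
class Event:
    lepton_pt: float  # transverse momentum of the electron or muon, GeV
    met: float        # missing transverse energy, GeV
    jets: list[Jet] = field(default_factory=list)

def passes_lepton_plus_jets(ev: Event, pt_min: float = 20.0, et_min: float = 20.0,
                            met_min: float = 20.0, n_jets: int = 4) -> bool:
    """Single-lepton top-pair selection with at least one b-tagged jet (sketch)."""
    good_jets = [j for j in ev.jets if j.et >= et_min]
    return (ev.lepton_pt > pt_min
            and ev.met > met_min
            and len(good_jets) == n_jets              # assumption: exactly four jets
            and any(j.b_tagged for j in good_jets))   # at least one b-tag

candidate = Event(lepton_pt=35.0, met=42.0,
                  jets=[Jet(85.0, True), Jet(60.0, False), Jet(41.0, False), Jet(27.0, False)])
print(passes_lepton_plus_jets(candidate))
```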

A picture of the decay of the top-antitop pair will, I fear, be clearer than my text in explaining exactly what top decays look like in the single lepton final state. It is shown on the left.

The fitting method used by this precise analysis, called "MTM" by the authors, is not new, and this result is not much more than an update of a former result which used 20% less data. However, the authors have managed to include in their selection of top-pair candidates a set of events where the high-momentum muon is only loosely identified in the detector, without significantly affecting the background fraction. In the end, the total gain in statistics over the previous iteration of the analysis amounts to about 50%.

The MTM method is a very well-oiled machine by now. The configuration of the final-state objects measured in the detector (leptons, jets, missing transverse energy from the escaping neutrino) is related to the hard subprocess that generated them by transfer functions, which enshrine the detector resolution and all the other nuisances that degrade the measurement. An integration of the matrix element over a 19-dimensional phase space allows one to fit for the most likely top quark mass and jet energy scale. Simple, huh?
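
To make the idea of a transfer function concrete, here is a deliberately tiny one-dimensional toy in Python; it is not the MTM itself. The "matrix element" is replaced by a Breit-Wigner line shape for the top mass, the transfer function by a Gaussian detector resolution, and the 19-dimensional integral by a numerical convolution over a single parton-level mass: P(x|m) = ∫ BW(y; m, Γ) T(x|y) dy. The fit scans m for the maximum of the summed log-likelihood. The width, resolution, grid and sample size are assumed values chosen for illustration, and the jet energy scale is left out.

```python
import math
import random
from operator import mul

GAMMA_TOP = 1.5     # GeV, assumed top width for the toy
RESOLUTION = 12.0   # GeV, assumed Gaussian resolution (the toy "transfer function")
GRID = [130.0 + 0.25 * k for k in range(361)]  # parton-level masses, 130..220 GeV

def breit_wigner(y: float, m: float, gamma: float = GAMMA_TOP) -> float:
    """Cauchy (non-relativistic Breit-Wigner) density of the parton-level mass y."""
    half = 0.5 * gamma
    return half / (math.pi * ((y - m) ** 2 + half ** 2))

def transfer(x: float, y: float, sigma: float = RESOLUTION) -> float:
    """Density to reconstruct mass x when the parton-level mass is y."""
    return math.exp(-0.5 * ((x - y) / sigma) ** 2) / (sigma * math.sqrt(2.0 * math.pi))

def generate(n: int, m_true: float, seed: int = 1) -> list[float]:
    """Toy reconstructed masses: BW-distributed y (truncated to the grid) smeared by T."""
    rng = random.Random(seed)
    events = []
    while len(events) < n:
        # Inverse-CDF sampling of the Cauchy distribution.
        y = m_true + 0.5 * GAMMA_TOP * math.tan(math.pi * (rng.random() - 0.5))
        if GRID[0] <= y <= GRID[-1]:
            events.append(y + rng.gauss(0.0, RESOLUTION))
    return events

def log_likelihood(m: float, t_matrix: list[list[float]]) -> float:
    """Sum over events of log P(x_i | m), with P(x|m) = sum_k w_k(m) T(x | y_k)."""
    w = [breit_wigner(y, m) for y in GRID]
    norm = sum(w)  # normalise the truncated, discretised line shape to unit sum
    w = [wi / norm for wi in w]
    return sum(math.log(sum(map(mul, w, row))) for row in t_matrix)

def fit_mass(events: list[float], m_lo: float = 160.0, m_hi: float = 186.0,
             step: float = 0.1) -> float:
    """Grid scan of the top mass maximising the toy likelihood."""
    # T(x_i | y_k) does not depend on m, so tabulate it once per event.
    t_matrix = [[transfer(x, y) for y in GRID] for x in events]
    n_steps = int(round((m_hi - m_lo) / step))
    masses = [m_lo + step * i for i in range(n_steps + 1)]
    return max(masses, key=lambda m: log_likelihood(m, t_matrix))

events = generate(300, m_true=172.8, seed=7)
print(f"fitted top mass: {fit_mass(events, 165.0, 181.0, 0.2):.1f} GeV")
```

Tabulating the transfer function once per event and reusing it for every mass hypothesis keeps the scan cheap; the real analysis faces the same trade-off in many more dimensions.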

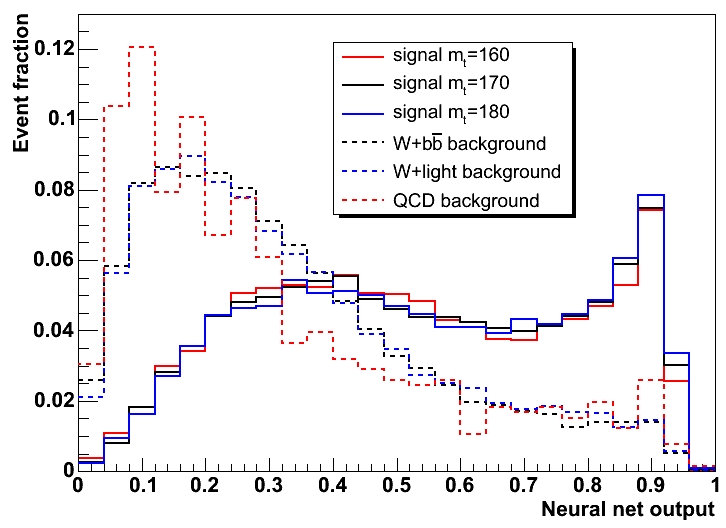

Actually, not so much. Before a fit can be performed, a neural network trained to distinguish top-pair production from the main background (W bosons produced in association with b-quark jets) is run to help select the dataset which will be fed to the MTM method. This neural network employs ten kinematic observable quantities to separate signal from backgrounds: several combinations of the transverse energy of the measured objects, plus some shape variables.

The output of the neural network is shown above, for different top-quark simulations and for the main backgrounds. Top events tend to peak at high values of the NN discriminant. The discriminant is used to cook up a likelihood function, and a selection on the latter retains 918 events, of which about seven hundred come from top-pair decay. It is this final sample that provides the measurement.

Results and Conclusions

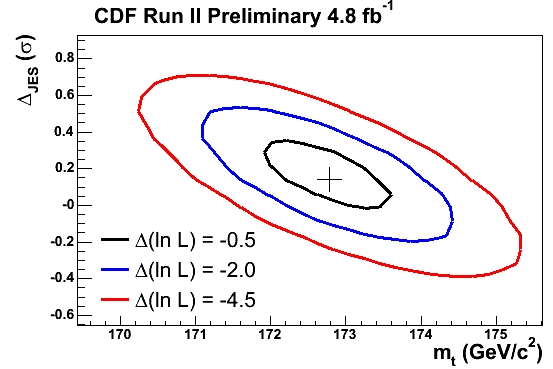

The maximization of the final likelihood results in a top quark mass of 172.8 +- 0.7 +- 0.6 +- 0.8 GeV, where the errors have been separated according to three sources: respectively, statistical, systematic due to the residual jet energy scale uncertainty, and other systematic effects. This is a total 1.3 GeV uncertainty! It is thus a measurement with better than 0.8% precision, and the best single top mass measurement ever achieved. You can see the result above, plotted in the plane of the two fitted variables: the top mass (on the x axis) and the jet energy scale (in standard deviations from the expected value, on the y axis). The innermost ellipse draws a 1-sigma contour in the values of the two estimated parameters.
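
A quick check of the error budget, assuming the three components are independent and added in quadrature (the usual convention, though the text does not spell it out). With the rounded components the sum comes out at about 1.2 GeV; the quoted 1.3 GeV presumably reflects unrounded inputs, and either way the relative precision stays below 0.8%.

```python
import math

mass = 172.8                      # GeV, central value
stat, jes, syst = 0.7, 0.6, 0.8   # GeV, components as quoted (rounded)

# Independent uncertainties combine in quadrature.
total = math.sqrt(stat ** 2 + jes ** 2 + syst ** 2)
print(f"quadrature sum ~ {total:.2f} GeV, relative ~ {100 * total / mass:.2f}%")
print(f"quoted 1.3 GeV total, relative ~ {100 * 1.3 / mass:.2f}%")
```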

It will be very nice to see this result combined with the other world-class measurements performed by CDF and DZERO into a single world average. I believe the final result will reach a 1 GeV uncertainty, and this will be a splendid advancement of human knowledge.
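
A naive way to see why a combination can approach 1 GeV is the inverse-variance weighted average of uncorrelated measurements, sketched below in Python. The real Tevatron combinations use the BLUE method and account for correlated systematic uncertainties (parts of the jet energy scale modelling, for instance), which this sketch ignores, so it tends to be optimistic. The second input value is invented purely for illustration.

```python
import math

def weighted_average(values: list[float], errors: list[float]) -> tuple[float, float]:
    """Inverse-variance weighted mean of *uncorrelated* measurements and its error."""
    weights = [1.0 / e ** 2 for e in errors]
    total_weight = sum(weights)
    mean = sum(w * v for w, v in zip(weights, values)) / total_weight
    return mean, 1.0 / math.sqrt(total_weight)

# This result plus a second, purely hypothetical, uncorrelated measurement of equal precision.
mean, err = weighted_average([172.8, 173.5], [1.3, 1.3])
print(f"combined: {mean:.2f} +- {err:.2f} GeV")
```

Two equally precise, uncorrelated measurements shrink the error by a factor of the square root of two, from 1.3 to about 0.92 GeV.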

It only remains to congratulate the authors for this important new result: Lina Galtieri, Pat Lujan, Jason Nielsen, John Freeman, and Igor Volobouev. Way to go guys!

Further Reading

Those interested in the details of the measurement will find more than they bargained for in the public web page of the top mass measurements by the CDF experiment.

A look at how DZERO is doing on the same physics can be had at their own public web page here.

Previous discussions of the Tevatron top mass measurements in this blog are available at the following links:

A discussion of the previous instance of this analysis.

List of articles on the top quark mass in the old blog.
