Scalars and vectors, dot and cross products, div, grad, curl and all that are old friends, met when one first steps beyond a single spatial dimension. This blog reviews those definitions, then compares the familiar forms to quaternion products, where all of these structures were first seen. Yet the constraint that quaternion products remain quaternions puts an odd slant on our familiar friends.

Scalars are the numbers we first learn about. The number of blocks is a scalar, as is one's weight on a scale. A scalar is how big something is. Physicists generalize the idea by using either a real or a complex number. Which one gets used depends on the situation.

[clarification: one can get a bit fancier and define a basis vector for a scalar, call it e0. It is only in physics graduate school that one entertains values of e0 other than one. This basis vector only has dimension, but does not have direction. By that I mean nothing happens to its value in a mirror.]

A vector has both a magnitude and a direction. Vectors are pointy. Here one first learns how nuanced definitions can be in mathematics. There is no one right way to point. Instead one provides a basis vector to do the work of pointing. A vector in n dimensions can be defined like so:

Take a scalar, big or small, real or complex, and multiply it with the vector to make a new vector, resized. [note: added e0's]

Two scalars can be added together to make a new scalar. Two vectors with the same set of basis vectors can be added together to make a new vector.
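Here is a minimal Python sketch of the two operations just described: resizing a vector with a scalar, and adding two vectors that share a basis. The function names `scale` and `add` are illustrative choices, and vectors are assumed to be plain tuples of components.

```python
def scale(s, v):
    """Multiply every component of the vector v by the scalar s."""
    return tuple(s * vi for vi in v)


def add(v, w):
    """Add two vectors component by component (same basis assumed)."""
    if len(v) != len(w):
        raise ValueError("vectors must have the same number of components")
    return tuple(vi + wi for vi, wi in zip(v, w))


v = (1.0, 2.0, 3.0)
w = (4.0, 5.0, 6.0)

resized = scale(2.0, v)   # same direction, twice the length
total = add(v, w)         # a new vector in the same basis
```

Nothing here depends on the number of dimensions, so the same two functions work for any n.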

The dot product takes two vectors with the same basis vectors and returns a scalar. Technical jargon can be confusing at this point since physicists often speak of scalars as numbers that don't change under a transformation. Instead, I will consistently use the earlier definition: one number with no pointiness.

When introduced to students, the basis vectors invariably have a particular property: the basis vectors are orthonormal. The ortho indicates that the dot product between any two different basis vectors happens to be zero. The normal indicates that the square of any basis vector is unity. The dot product between two vectors V and W is:

There was no need to write down e1² since that evaluates to unity. There is a simple interpretation of the dot product: it is the product of the two lengths and the cosine of the angle between the two vectors. For two unit vectors it is exactly that cosine.
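A short Python sketch of the component form may help, assuming an orthonormal basis so that only matching components multiply. The names `dot`, `norm`, and `cos_angle` are illustrative. Dividing the dot product by the two lengths isolates the cosine.

```python
import math


def dot(v, w):
    """Sum of products of matching components (orthonormal basis assumed)."""
    return sum(vi * wi for vi, wi in zip(v, w))


def norm(v):
    """Length of a vector: the square root of its dot product with itself."""
    return math.sqrt(dot(v, v))


def cos_angle(v, w):
    """Cosine of the angle between two nonzero vectors."""
    return dot(v, w) / (norm(v) * norm(w))


v = (1.0, 0.0, 0.0)
w = (1.0, 1.0, 0.0)

print(dot(v, w))        # 1.0
print(cos_angle(v, w))  # cosine of 45 degrees, about 0.7071
```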

The basis vectors span the vector space. All possible vectors in the vector space can be described by a combination of scalars and the orthonormal basis vectors.

The basis vectors need not be orthogonal, nor must they be normal. Changing the basis vectors changes the details of calculating the dot product, making things more complicated. The job is accomplished by using a metric tensor. The elements down the diagonal are for the squared basis vectors. The off-diagonal terms provide rules for the dot product between two different basis vectors. I plan on going into this subject more next week.
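As a rough sketch of how a metric tensor does that job, the Python below treats the metric as a table of dot products between basis vectors. The function name `metric_dot` and the particular skewed basis are assumptions made for illustration.

```python
def metric_dot(v, w, g):
    """Dot product of component tuples v and w, where g[i][j] = e_i . e_j."""
    n = len(v)
    return sum(v[i] * g[i][j] * w[j] for i in range(n) for j in range(n))


# Orthonormal basis: ones on the diagonal, zeros elsewhere.
identity = [[1, 0],
            [0, 1]]

# Hypothetical skewed basis: e1 = (1, 0) and e2 = (1, 1) in Cartesian terms,
# so e1.e1 = 1, e1.e2 = 1, e2.e2 = 2.
skewed = [[1, 1],
          [1, 2]]

v = (1, 1)  # components, meaning 1*e1 + 1*e2

print(metric_dot(v, v, identity))  # 2
print(metric_dot(v, v, skewed))    # 5, since e1 + e2 = (2, 1) in Cartesian terms
```

With the identity metric the double sum collapses to the familiar component formula.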

The cross product of two vectors generates a third vector. The length of that vector is equal to the area of the parallelogram formed by the two vectors. If the vectors point in the same direction, there is no area, and thus the cross product is zero. There is a simple interpretation of the cross product: its length is the product of the two lengths and the sine of the angle between the two vectors. If the angle is zero, then the sine is zero. The sine is an odd function. One manifestation of that property is that reversing the order of the two vectors in a cross product changes the sign. [clarification: if one uses two polar vectors - they are vectors that in a mirror still point the same direction - then the cross product is an axial or pseudo vector, which flips sign. In three dimensions with orthonormal coordinates, the cross product is:

In higher dimensions, the magnitude of the cross product is known from the sine calculation. One set of values for the cross product cannot be pinpointed, so the meaning is more difficult to track down. That subject is beyond the scope of this blog.]
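The three-dimensional case can be sketched in Python using the standard component formula, assuming orthonormal, right-handed coordinates. The name `cross` is illustrative.

```python
import math


def cross(v, w):
    """Cross product of two 3-vectors in orthonormal, right-handed coordinates."""
    v1, v2, v3 = v
    w1, w2, w3 = w
    return (v2 * w3 - v3 * w2,
            v3 * w1 - v1 * w3,
            v1 * w2 - v2 * w1)


def length(v):
    """Euclidean length of a vector."""
    return math.sqrt(sum(vi * vi for vi in v))


x = (1.0, 0.0, 0.0)
y = (0.0, 1.0, 0.0)

print(cross(x, y))  # (0.0, 0.0, 1.0)
print(cross(y, x))  # the same vector with the sign reversed
print(length(cross((2.0, 0.0, 0.0), (0.0, 3.0, 0.0))))  # 6.0, area of a 2 by 3 rectangle
```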

If the cross product is about sines while the dot product is about cosines, there should be a link between the two.

This is Lagrange's identity.
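For 3-vectors the identity is commonly stated as: the squared length of the cross product plus the square of the dot product equals the product of the two squared lengths. The Python below is only a numerical spot check of that common form on one pair of integer vectors, chosen arbitrarily. It is not a proof, and the helper names are illustrative.

```python
def dot(v, w):
    """Dot product in an orthonormal basis."""
    return sum(vi * wi for vi, wi in zip(v, w))


def cross(v, w):
    """Cross product of two 3-vectors in orthonormal coordinates."""
    v1, v2, v3 = v
    w1, w2, w3 = w
    return (v2 * w3 - v3 * w2,
            v3 * w1 - v1 * w3,
            v1 * w2 - v2 * w1)


v = (1, 2, 3)
w = (4, 5, 6)

c = cross(v, w)
left = dot(c, c) + dot(v, w) ** 2   # |V x W|^2 + (V . W)^2
right = dot(v, v) * dot(w, w)       # |V|^2 |W|^2

print(left, right)  # 1078 1078
```

Dividing both sides by the squared lengths turns this into sine squared plus cosine squared equals one.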

*** Start Historical Interlude ***

Wikipedia appears to give credit for the jargon of vector analysis to Prof. Gibbs of Yale and thermodynamics fame. I recall reading that it was Hamilton who coined the jargon as he dissected quaternion products. Certainly one person came up with the word dot product and the corresponding divergence, as well as the cross product and its calculus clone the curl. I don't spelunk into math history and thus don't have access to primary source documents.

Hamilton had searched in vain for a way to multiply and divide triples of numbers for a decade. The day after his discovery of quaternions, he defined "pure quaternions," those whose first term is zero so that the result is a triple. He abandoned the inherent scalar + 3-vector nature of quaternions since it did not fit his expectation. This is a common error, one I am guilty of too.

*** End Historical Interlude ***

Quaternions are all about automorphisms. Start with a quaternion and end with a quaternion no matter what has happened in between. It is like everyone (almost) says: there's no place like spacetime, there's no place like spacetime, there's no place like spacetime. Something starts in spacetime and that's where it ends, be it a big collection of events like a supernova exploding, or an electron absorbing a photon. My life starts in spacetime and that's where it will end. On a small scale, the automorphisms are reversible. Reversibility is a reason I cling to division, multiplication in reverse.

What is multiplication? I learned about multiplication using blocks:

I could count them: 1, 2, 3, 4. [Note: these are the blocks of a real three-year-old, and the block for the number four is misplaced.] The next step was to group them and multiply.

This is the cliché of multiplication: 2x2=4.

There is a different way to line up the boxes so now they make up an area.

Two times two still equals four, but now the repetitive addition applies to a plane.

Go up one more dimension to describe a volume:

I could count all eight, or form three groups of two and form the product: 2x2x2=8.

This is trivial for readers here at Science 2.0. It is the next step that gets fun. How do you go up one more dimension? I cannot do it with blocks...or maybe I can so long as I view the blocks as moving through time as well as space. Repetitive addition in spacetime leads to an object moving at a constant velocity.
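One way to picture repetitive addition in spacetime is the small Python sketch below. It assumes an event is a 4-tuple (t, x, y, z) and that the step being added is a hypothetical (1, 2, 0, 0). Adding the same step over and over gives events whose ratio of distance to time never changes.

```python
def add4(p, q):
    """Add two spacetime 4-tuples (t, x, y, z) component by component."""
    return tuple(a + b for a, b in zip(p, q))


step = (1.0, 2.0, 0.0, 0.0)    # hypothetical step: 1 unit of time, 2 units along x
event = (0.0, 0.0, 0.0, 0.0)   # start at the origin
worldline = [event]

for _ in range(4):
    event = add4(event, step)  # repetitive addition
    worldline.append(event)

# Velocity from the origin to each later event: (x/t, y/t, z/t).
velocities = [(e[1] / e[0], e[2] / e[0], e[3] / e[0]) for e in worldline[1:]]

print(worldline[-1])  # (4.0, 8.0, 0.0, 0.0)
print(velocities)     # every entry is (2.0, 0.0, 0.0)
```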

It is the appeal of logical consistency that keeps me close to quaternions. If repetitive addition is how I understand multiplication for lines, areas, and volumes, the same should apply in spacetime. I have been able to see lines, areas, and volumes that move with my quaternion animation software working with two, three, and four parameters respectively.

Look at the four vector algebra operations - scalar * scalar, scalar * vector, dot and cross product - from a quaternion product automorphism perspective:

One can pluck out each of these. This is what I expected to see based on my training in vector algebra.
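For readers who like to see the bookkeeping, here is a minimal Python sketch of the standard Hamilton product, with a quaternion stored as a 4-tuple (scalar, x, y, z). The name `qmul` is an illustrative choice. The comments mark where the scalar * scalar, scalar * vector, dot, and cross pieces show up among the sixteen terms.

```python
def qmul(a, b):
    """Hamilton product of two quaternions stored as (scalar, x, y, z)."""
    a0, a1, a2, a3 = a
    b0, b1, b2, b3 = b
    return (
        # scalar * scalar, minus the dot product of the two 3-vectors
        a0 * b0 - (a1 * b1 + a2 * b2 + a3 * b3),
        # scalar * vector terms, plus one component of the cross product
        a0 * b1 + b0 * a1 + (a2 * b3 - a3 * b2),
        a0 * b2 + b0 * a2 + (a3 * b1 - a1 * b3),
        a0 * b3 + b0 * a3 + (a1 * b2 - a2 * b1),
    )


i = (0, 1, 0, 0)
j = (0, 0, 1, 0)

print(qmul(i, j))  # (0, 0, 0, 1), that is, k
print(qmul(j, i))  # (0, 0, 0, -1), order matters
print(qmul(i, i))  # (-1, 0, 0, 0)
```

Two quaternions go in and one quaternion comes out, which is the automorphism property in its plainest form.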

If the quaternion product is viewed as an automorphism - start with two quaternions and end with a quaternion - then any and all operations that are part of a quaternion product must share this quality. If one wants to look only at the dot product, that would be done like so:

One could complain about the complexity of this quaternion expression. Yet it is a result of the request to look at only three of the sixteen terms of a quaternion product. Note the zero [quaternion] vector, required so that the result has all the properties of a quaternion.

I can no longer honestly say there is a vector dot product in a quaternion product. A vector dot product as defined earlier in this blog leaves a [quaternion] vector undefined. The quaternion dot product - a distinct beast - requires the quaternion vector with zeros. Undefined is different from zero. Quaternions also constrain the number of dimensions in play. A vector dot product can work in an arbitrary number of dimensions, which is not the case for quaternions [which have only four dimensions].

The same holds true for a [quaternion] scalar times a [quaternion] scalar. In vector algebra, the product is the end of the story. With quaternion automorphisms, the quaternion scalar sits beside three zeros, and the product has three zeros so that it too is a quaternion. The quaternion scalar is constrained to be a real number, while the 3-vectors are imaginary.

A [quaternion] scalar times a [quaternion] vector looks like so:

In vector algebra, there is no notion about keeping a place at the table for a [quaternion] scalar. With quaternion automorphisms, the spot is saved automatically.

A [quaternion] vector cross product is all about [quaternion] vectors. With quaternions, the silent scalar has a role. [Clarification: if one has "normal" polar vectors as the quaternion vector, then the cross product is an axial quaternion vector. This is a happy accident of 3D quaternion vectors, and the issue of a cross product in more or fewer dimensions is beyond the scope of this blog.]
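The zero padding can be made concrete with a Python sketch. It splits a quaternion product into four pieces, each of which is itself a full quaternion, with zeros wherever a piece has nothing to say. This is a sketch by direct component extraction, not the quaternion expression used in this blog. The names `pieces` and `total` are hypothetical, and the dot piece carries the minus sign it has inside the product.

```python
def dot3(p, q):
    """Dot product of two 3-tuples."""
    return sum(a * b for a, b in zip(p, q))


def cross3(p, q):
    """Cross product of two 3-tuples."""
    return (p[1] * q[2] - p[2] * q[1],
            p[2] * q[0] - p[0] * q[2],
            p[0] * q[1] - p[1] * q[0])


def pieces(p, q):
    """Split the quaternion product p*q into four zero-padded quaternions."""
    p0, P = p[0], p[1:]
    q0, Q = q[0], q[1:]
    return {
        "scalar*scalar": (p0 * q0, 0, 0, 0),
        "scalar*vector": (0,) + tuple(p0 * qi + q0 * pi for pi, qi in zip(P, Q)),
        "dot": (-dot3(P, Q), 0, 0, 0),   # sign as it appears in the product
        "cross": (0,) + cross3(P, Q),
    }


def total(parts):
    """Add the pieces back together, component by component."""
    return tuple(sum(c) for c in zip(*parts.values()))


parts = pieces((1, 2, 3, 4), (5, 6, 7, 8))
print(parts["dot"])    # (-65, 0, 0, 0)
print(parts["cross"])  # (0, -4, 8, -4)
print(total(parts))    # (-60, 12, 30, 24), the full quaternion product
```

Every piece has four slots, so each one can be handed to any function that expects a quaternion.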

The elephant that lives in my room is the set of relationships between these various parts. With quaternions the relationships are fixed so that one can multiply and divide them. In vector analysis, it is divide and conquer. Vector analysis has crushed quaternions; that is the history. I will continue riding the real and imaginary elephant wherever it goes.

One can see the difference between vector algebra and quaternion automorphisms. I chose two quaternion polynomials pretty much at random and formed their product. Here were the polynomials with the parameters (a, b, c, d):

Both have a cubic term, V with x, W with y. The W polynomial also has a squared term in x. Here is the animation using just the parameter a:

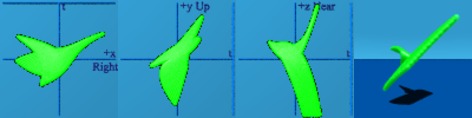

The s-shaped curve indicates a cubic, while the parabola indicates the square. So the yellow is the function V while the red is W. One can now form the product of these two polynomials, color it in green:

Apparently the first term is always negative for the numbers created by this product.

Here is just the product, in isolation, with the dot product in orange and the cross product in pink.

The dot product sits at the spatial center of the animation, while the cross product blinks on the screen for one frame where t=0.

To save some of the commenters the trouble, this was a random pair of polynomials forming just as random a product. I don't claim this maps to anything physicists study. Hopefully some will recognize the potential power in these initial doodles. Many functions can be constructed out of a polynomial series, including those that are the subject of study in physics.

[To point out an example, one can study the sine function which can be written as a polynomial expression. The sine function is relevant to the description of numerous physical systems.]
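The sine example can be sketched in Python with the standard Taylor series about zero, which uses only odd powers of x. The function name `sine_series` and the default of ten terms are illustrative choices.

```python
import math


def sine_series(x, terms=10):
    """Approximate sin(x) with the first `terms` terms of its Taylor series."""
    total = 0.0
    for n in range(terms):
        # Terms alternate in sign and use only odd powers: x, x^3/3!, x^5/5!, ...
        total += (-1) ** n * x ** (2 * n + 1) / math.factorial(2 * n + 1)
    return total


x = 1.0
print(sine_series(x))  # close to math.sin(1.0)
print(math.sin(x))
```

Only odd powers appear because the sine is an odd function.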

The same darn polynomials can be used with two parameters to create a world of strings.

The square and cubic aspects of the polynomials are less clear.

One can still form a product:

The green string is doing quite a bit more as can be seen when isolated:

This is vaguely bird-like.

I did spend a few hours generating the three and four parameter animations, which involve membranes and solids, but I don't think they will add to the discussion. There are readers who are vocally against any of these animations. Most readers probably just scratch their heads since the animations don't map to subjects studied in physics or math. This work gives me some hope that all the way brighter folks playing with strings do not represent a complete waste of time and money.

Doug

Snarky Puzzle: I learned that if your cross product is zero, then you have nothing but dot product, and vice versa. Is that always true? Worry about the 2 parameter situation...

Next Monday/Tuesday: Scalars, Vectors, and Quaternions: Life Without Orthonormal Basis Vectors (2/2)

[Note: all definitions written in terms of components relied on the explicit assumption that the basis vectors were orthonormal. All that were written with dot products and curls should remain unchanged if the basis vectors are no longer orthonormal. The blog will make an effort to address that issue.]
